Compare commits

48 Commits

v0.21.0...6b9b785b5c

| SHA1 |
|---|
| 6b9b785b5c |
| 4c0a89f262 |
| 76b1ee2a00 |
| 771a38434f |
| 886d38620e |
| c7efaab30e |
| ff49454501 |
| 14273b4595 |
| abe7132630 |
| c1151519a0 |
| a1147ce609 |
| d907e79893 |
| 1b19d302c5 |
| 840b2b5809 |
| a6039cf563 |
| 8be7380b79 |
| afb8a84f7b |
| 6bf0cda16f |
| 5715ca6b74 |
| 8f465525f7 |
| f20dca2895 |
| 0c557e37ad |
| d0bfe8b10c |
| 28afc7e67d |
| 73c33bc8d2 |
| 476852e8f1 |
| e6cf00cb33 |
| d039d1e73d |
| d050ef568d |
| 028c2d83e9 |
| b5d6a6e8f2 |
| 5dfdbcce3a |
| 4fae40f66a |
| a1b947ffd6 |
| f9c7404bee |
| 5c1791d7f0 |
| e82617f6de |
| a7abc57f68 |
| cf1f523d03 |
| ccb255919a |
| b68c84b52e |
| 93cf0258c3 |
| b79fef1ca8 |
| 2b50de3186 |
| d8ef22db68 |
| 592f3b1555 |
| 3404469e2a |
| 63d7382dc9 |

.github/workflows/release.yml

```diff
@@ -88,7 +88,9 @@ jobs:
        with:
          context: .
          push: true
-          tags: infiniflow/ragflow:${{ env.RELEASE_TAG }}
+          tags: |
+            infiniflow/ragflow:${{ env.RELEASE_TAG }}
+            infiniflow/ragflow:latest-full
          file: Dockerfile
          platforms: linux/amd64

@@ -98,7 +100,9 @@ jobs:
        with:
          context: .
          push: true
-          tags: infiniflow/ragflow:${{ env.RELEASE_TAG }}-slim
+          tags: |
+            infiniflow/ragflow:${{ env.RELEASE_TAG }}-slim
+            infiniflow/ragflow:latest-slim
          file: Dockerfile
          build-args: LIGHTEN=1
          platforms: linux/amd64
```

```diff
@@ -153,6 +153,16 @@ class Graph:
     def get_tenant_id(self):
         return self._tenant_id
 
+    def get_variable_value(self, exp: str) -> Any:
+        exp = exp.strip("{").strip("}").strip(" ").strip("{").strip("}")
+        if exp.find("@") < 0:
+            return self.globals[exp]
+        cpn_id, var_nm = exp.split("@")
+        cpn = self.get_component(cpn_id)
+        if not cpn:
+            raise Exception(f"Can't find variable: '{cpn_id}@{var_nm}'")
+        return cpn["obj"].output(var_nm)
+
 
 class Canvas(Graph):
 
@@ -406,16 +416,6 @@ class Canvas(Graph):
             return False
         return True
 
-    def get_variable_value(self, exp: str) -> Any:
-        exp = exp.strip("{").strip("}").strip(" ").strip("{").strip("}")
-        if exp.find("@") < 0:
-            return self.globals[exp]
-        cpn_id, var_nm = exp.split("@")
-        cpn = self.get_component(cpn_id)
-        if not cpn:
-            raise Exception(f"Can't find variable: '{cpn_id}@{var_nm}'")
-        return cpn["obj"].output(var_nm)
-
     def get_history(self, window_size):
         convs = []
         if window_size <= 0:
```
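The moved `get_variable_value` resolves a reference either to a global (no `@`) or to a component output (`component_id@variable`) after stripping surrounding braces and spaces. A minimal standalone sketch of the same lookup, assuming plain dicts in place of the canvas globals and component objects; `resolve_reference` and the dict layout are hypothetical, not part of the codebase.

```python
from typing import Any, Dict


def resolve_reference(exp: str,
                      globals_: Dict[str, Any],
                      components: Dict[str, Dict[str, Any]]) -> Any:
    """Resolve a canvas variable reference (hypothetical standalone helper).

    Plain dicts stand in for the canvas globals and for each component's outputs.
    """
    # Strip braces, then spaces, then braces again, in the same order as the diff,
    # so "sys.query", "{sys.query}" and "{ sys.query }" all normalise to "sys.query".
    exp = exp.strip("{").strip("}").strip(" ").strip("{").strip("}")
    if exp.find("@") < 0:
        # No "@": the expression names a global such as "sys.query".
        return globals_[exp]
    # "component_id@variable": look up the component, then its output.
    cpn_id, var_nm = exp.split("@")
    outputs = components.get(cpn_id)
    if not outputs:
        raise KeyError(f"Can't find variable: '{cpn_id}@{var_nm}'")
    return outputs[var_nm]
```

Because the braces are stripped before lookup, the bare form `sys.query` and the braced form `{sys.query}` resolve to the same value; the template edits further down appear to align the stored references with the braced syntax rather than change what they resolve to.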

```diff
@@ -102,6 +102,8 @@ class LLM(ComponentBase):
 
     def get_input_elements(self) -> dict[str, Any]:
         res = self.get_input_elements_from_text(self._param.sys_prompt)
+        if isinstance(self._param.prompts, str):
+            self._param.prompts = [{"role": "user", "content": self._param.prompts}]
         for prompt in self._param.prompts:
             d = self.get_input_elements_from_text(prompt["content"])
             res.update(d)
@@ -113,6 +115,17 @@ class LLM(ComponentBase):
     def add2system_prompt(self, txt):
         self._param.sys_prompt += txt
 
+    def _sys_prompt_and_msg(self, msg, args):
+        if isinstance(self._param.prompts, str):
+            self._param.prompts = [{"role": "user", "content": self._param.prompts}]
+        for p in self._param.prompts:
+            if msg and msg[-1]["role"] == p["role"]:
+                continue
+            p = deepcopy(p)
+            p["content"] = self.string_format(p["content"], args)
+            msg.append(p)
+        return msg, self.string_format(self._param.sys_prompt, args)
+
     def _prepare_prompt_variables(self):
         if self._param.visual_files_var:
             self.imgs = self._canvas.get_variable_value(self._param.visual_files_var)
@@ -128,7 +141,6 @@ class LLM(ComponentBase):
 
         args = {}
         vars = self.get_input_elements() if not self._param.debug_inputs else self._param.debug_inputs
-        sys_prompt = self._param.sys_prompt
         for k, o in vars.items():
             args[k] = o["value"]
             if not isinstance(args[k], str):
@@ -138,16 +150,8 @@ class LLM(ComponentBase):
                     args[k] = str(args[k])
             self.set_input_value(k, args[k])
 
-        msg = self._canvas.get_history(self._param.message_history_window_size)[:-1]
-        for p in self._param.prompts:
-            if msg and msg[-1]["role"] == p["role"]:
-                continue
-            msg.append(deepcopy(p))
-
-        sys_prompt = self.string_format(sys_prompt, args)
+        msg, sys_prompt = self._sys_prompt_and_msg(self._canvas.get_history(self._param.message_history_window_size)[:-1], args)
         user_defined_prompt, sys_prompt = self._extract_prompts(sys_prompt)
-        for m in msg:
-            m["content"] = self.string_format(m["content"], args)
         if self._param.cite and self._canvas.get_reference()["chunks"]:
             sys_prompt += citation_prompt(user_defined_prompt)
 
```
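The new `_sys_prompt_and_msg` appends each configured prompt to the history unless it would repeat the role of the last message, formats only the appended prompts, and formats the system prompt. A self-contained sketch of that merge, assuming a simple `{name}` placeholder substitution in place of the component's `string_format` (the `string_format` and `sys_prompt_and_msg` functions below are hypothetical stand-ins, and the sketch copies the history list instead of appending to it in place).

```python
from copy import deepcopy
from typing import Dict, List, Tuple, Union

Message = Dict[str, str]


def string_format(template: str, args: Dict[str, str]) -> str:
    # Hypothetical stand-in: replace each "{name}" placeholder with its value.
    for k, v in args.items():
        template = template.replace("{" + k + "}", v)
    return template


def sys_prompt_and_msg(history: List[Message],
                       prompts: Union[str, List[Message]],
                       sys_prompt: str,
                       args: Dict[str, str]) -> Tuple[List[Message], str]:
    # A bare string prompt is treated as a single user message, as in the diff.
    if isinstance(prompts, str):
        prompts = [{"role": "user", "content": prompts}]
    msg = list(history)
    for p in prompts:
        # Skip a prompt whose role matches the last message so roles keep alternating.
        if msg and msg[-1]["role"] == p["role"]:
            continue
        p = deepcopy(p)  # never mutate the configured prompt template
        p["content"] = string_format(p["content"], args)
        msg.append(p)
    return msg, string_format(sys_prompt, args)
```

One behavioural difference is visible in the hunk itself: the removed loop ran `string_format` over every message, history included, whereas the new helper formats only the prompts it appends, so placeholders inside earlier history turns are left as they are.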

```diff
@@ -83,7 +83,7 @@
             },
             "password": "20010812Yy!",
             "port": 3306,
-            "sql": "Agent:WickedGoatsDivide@content",
+            "sql": "{Agent:WickedGoatsDivide@content}",
             "username": "13637682833@163.com"
         }
     },
@@ -114,9 +114,7 @@
         "params": {
             "cross_languages": [],
             "empty_response": "",
-            "kb_ids": [
-                "ed31364c727211f0bdb2bafe6e7908e6"
-            ],
+            "kb_ids": [],
             "keywords_similarity_weight": 0.7,
             "outputs": {
                 "formalized_content": {
@@ -124,7 +122,7 @@
                     "value": ""
                 }
             },
-            "query": "sys.query",
+            "query": "{sys.query}",
             "rerank_id": "",
             "similarity_threshold": 0.2,
             "top_k": 1024,
@@ -145,9 +143,7 @@
         "params": {
             "cross_languages": [],
             "empty_response": "",
-            "kb_ids": [
-                "0f968106727311f08357bafe6e7908e6"
-            ],
+            "kb_ids": [],
             "keywords_similarity_weight": 0.7,
             "outputs": {
                 "formalized_content": {
@@ -155,7 +151,7 @@
                     "value": ""
                 }
             },
-            "query": "sys.query",
+            "query": "{sys.query}",
             "rerank_id": "",
             "similarity_threshold": 0.2,
             "top_k": 1024,
@@ -176,9 +172,7 @@
         "params": {
             "cross_languages": [],
             "empty_response": "",
-            "kb_ids": [
-                "4ad1f9d0727311f0827dbafe6e7908e6"
-            ],
+            "kb_ids": [],
             "keywords_similarity_weight": 0.7,
             "outputs": {
                 "formalized_content": {
@@ -186,7 +180,7 @@
                     "value": ""
                 }
             },
-            "query": "sys.query",
+            "query": "{sys.query}",
             "rerank_id": "",
             "similarity_threshold": 0.2,
             "top_k": 1024,
@@ -347,9 +341,7 @@
         "form": {
             "cross_languages": [],
             "empty_response": "",
-            "kb_ids": [
-                "ed31364c727211f0bdb2bafe6e7908e6"
-            ],
+            "kb_ids": [],
             "keywords_similarity_weight": 0.7,
             "outputs": {
                 "formalized_content": {
@@ -357,7 +349,7 @@
                     "value": ""
                 }
             },
-            "query": "sys.query",
+            "query": "{sys.query}",
             "rerank_id": "",
             "similarity_threshold": 0.2,
             "top_k": 1024,
@@ -387,9 +379,7 @@
         "form": {
             "cross_languages": [],
             "empty_response": "",
-            "kb_ids": [
-                "0f968106727311f08357bafe6e7908e6"
-            ],
+            "kb_ids": [],
             "keywords_similarity_weight": 0.7,
             "outputs": {
                 "formalized_content": {
@@ -397,7 +387,7 @@
                     "value": ""
                 }
             },
-            "query": "sys.query",
+            "query": "{sys.query}",
             "rerank_id": "",
             "similarity_threshold": 0.2,
             "top_k": 1024,
@@ -427,9 +417,7 @@
         "form": {
             "cross_languages": [],
             "empty_response": "",
-            "kb_ids": [
-                "4ad1f9d0727311f0827dbafe6e7908e6"
-            ],
+            "kb_ids": [],
             "keywords_similarity_weight": 0.7,
             "outputs": {
                 "formalized_content": {
@@ -437,7 +425,7 @@
                     "value": ""
                 }
             },
-            "query": "sys.query",
+            "query": "{sys.query}",
             "rerank_id": "",
             "similarity_threshold": 0.2,
             "top_k": 1024,
@@ -539,7 +527,7 @@
             },
             "password": "20010812Yy!",
             "port": 3306,
-            "sql": "Agent:WickedGoatsDivide@content",
+            "sql": "{Agent:WickedGoatsDivide@content}",
             "username": "13637682833@163.com"
         },
         "label": "ExeSQL",
```

```diff
@@ -157,7 +157,7 @@ class CodeExec(ToolBase, ABC):
 
         try:
             resp = requests.post(url=f"http://{settings.SANDBOX_HOST}:9385/run", json=code_req, timeout=os.environ.get("COMPONENT_EXEC_TIMEOUT", 10*60))
-            logging.info(f"http://{settings.SANDBOX_HOST}:9385/run", code_req, resp.status_code)
+            logging.info(f"http://{settings.SANDBOX_HOST}:9385/run, code_req: {code_req}, resp.status_code {resp.status_code}:")
             if resp.status_code != 200:
                 resp.raise_for_status()
             body = resp.json()
```
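The removed `logging.info` call passed `code_req` and `resp.status_code` as extra arguments to a message with no `%s` placeholders, so the `logging` module could not format the record and reported a logging error instead of the message; the replacement inlines everything into an f-string. Separately, the unchanged context line reads `COMPONENT_EXEC_TIMEOUT` with `os.environ.get`, which yields a string whenever the variable is set, while `requests` expects a number (or a connect/read tuple) for `timeout`. A minimal sketch of both points, assuming a fallback to the diff's `10*60` default on bad input; `exec_timeout` and `log_sandbox_call` are hypothetical helpers, not part of the codebase.

```python
import logging
import os
from typing import Mapping

DEFAULT_TIMEOUT_S = 10 * 60  # mirrors the 10*60 default in the diff


def exec_timeout(env: Mapping[str, str] = os.environ,
                 default: float = DEFAULT_TIMEOUT_S) -> float:
    """Read COMPONENT_EXEC_TIMEOUT as seconds, falling back to the default (sketch)."""
    raw = env.get("COMPONENT_EXEC_TIMEOUT")
    if raw is None:
        return float(default)
    try:
        value = float(raw)
    except ValueError:
        logging.warning("Ignoring non-numeric COMPONENT_EXEC_TIMEOUT=%r", raw)
        return float(default)
    # Non-positive timeouts are rejected by requests, so fall back instead.
    return value if value > 0 else float(default)


def log_sandbox_call(url: str, code_req: dict, status: int) -> None:
    # Lazy %-style formatting: one placeholder per argument, and the string is
    # only built if INFO records are actually emitted.
    logging.info("%s, code_req: %s, resp.status_code %s", url, code_req, status)
```

The result of `exec_timeout()` could then be passed as `timeout=` to `requests.post`; lazy `%s` arguments are the `logging` module's documented alternative to building the message eagerly with an f-string.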

```diff
@@ -53,7 +53,7 @@ class ExeSQLParam(ToolParamBase):
         self.max_records = 1024
 
     def check(self):
-        self.check_valid_value(self.db_type, "Choose DB type", ['mysql', 'postgresql', 'mariadb', 'mssql'])
+        self.check_valid_value(self.db_type, "Choose DB type", ['mysql', 'postgres', 'mariadb', 'mssql'])
         self.check_empty(self.database, "Database name")
         self.check_empty(self.username, "database username")
         self.check_empty(self.host, "IP Address")
@@ -111,7 +111,7 @@ class ExeSQL(ToolBase, ABC):
         if self._param.db_type in ["mysql", "mariadb"]:
             db = pymysql.connect(db=self._param.database, user=self._param.username, host=self._param.host,
                                  port=self._param.port, password=self._param.password)
-        elif self._param.db_type == 'postgresql':
+        elif self._param.db_type == 'postgres':
             db = psycopg2.connect(dbname=self._param.database, user=self._param.username, host=self._param.host,
                                   port=self._param.port, password=self._param.password)
         elif self._param.db_type == 'mssql':
```
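These hunks rename the accepted PostgreSQL `db_type` from `'postgresql'` to `'postgres'` in both `check()` and the connection branch. A saved component that still stores `'postgresql'` would presumably be rejected by the new `check()`. One way to tolerate both spellings is to normalise before validating; the sketch below assumes that approach, and `DB_TYPE_ALIASES` and `normalize_db_type` are hypothetical, not part of this diff.

```python
from typing import Dict, Tuple

# Accepted values after this change, as listed in ExeSQLParam.check above.
VALID_DB_TYPES: Tuple[str, ...] = ("mysql", "postgres", "mariadb", "mssql")

# Hypothetical alias table for values written before the rename.
DB_TYPE_ALIASES: Dict[str, str] = {"postgresql": "postgres"}


def normalize_db_type(db_type: str) -> str:
    """Map legacy or differently-cased db_type values onto the accepted set (sketch)."""
    value = db_type.strip().lower()
    value = DB_TYPE_ALIASES.get(value, value)
    if value not in VALID_DB_TYPES:
        raise ValueError(f"Choose DB type: {db_type!r} is not one of {VALID_DB_TYPES}")
    return value
```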

```diff
@@ -28,6 +28,8 @@ from api.db import CanvasCategory, FileType
 from api.db.services.canvas_service import CanvasTemplateService, UserCanvasService, API4ConversationService
 from api.db.services.document_service import DocumentService
 from api.db.services.file_service import FileService
+from api.db.services.pipeline_operation_log_service import PipelineOperationLogService
+from api.db.services.task_service import queue_dataflow, CANVAS_DEBUG_DOC_ID
 from api.db.services.user_service import TenantService
 from api.db.services.user_canvas_version import UserCanvasVersionService
 from api.settings import RetCode
@@ -39,6 +41,7 @@ from api.db.db_models import APIToken
 import time
 
 from api.utils.file_utils import filename_type, read_potential_broken_pdf
+from rag.flow.pipeline import Pipeline
 from rag.utils.redis_conn import REDIS_CONN
 
 
```

```diff
@@ -48,14 +51,6 @@ def templates():
     return get_json_result(data=[c.to_dict() for c in CanvasTemplateService.query(canvas_category=CanvasCategory.Agent)])
 
 
-@manager.route('/list', methods=['GET']) # noqa: F821
-@login_required
-def canvas_list():
-    return get_json_result(data=sorted([c.to_dict() for c in \
-                                 UserCanvasService.query(user_id=current_user.id, canvas_category=CanvasCategory.Agent)], key=lambda x: x["update_time"]*-1)
-    )
-
-
 @manager.route('/rm', methods=['POST']) # noqa: F821
 @validate_request("canvas_ids")
 @login_required
```

```diff
@@ -77,9 +72,10 @@ def save():
     if not isinstance(req["dsl"], str):
         req["dsl"] = json.dumps(req["dsl"], ensure_ascii=False)
     req["dsl"] = json.loads(req["dsl"])
+    cate = req.get("canvas_category", CanvasCategory.Agent)
     if "id" not in req:
         req["user_id"] = current_user.id
-        if UserCanvasService.query(user_id=current_user.id, title=req["title"].strip(), canvas_category=CanvasCategory.Agent):
+        if UserCanvasService.query(user_id=current_user.id, title=req["title"].strip(), canvas_category=cate):
             return get_data_error_result(message=f"{req['title'].strip()} already exists.")
         req["id"] = get_uuid()
         if not UserCanvasService.save(**req):
```

```diff
@@ -148,6 +144,14 @@ def run():
     if not isinstance(cvs.dsl, str):
         cvs.dsl = json.dumps(cvs.dsl, ensure_ascii=False)
 
+    if cvs.canvas_category == CanvasCategory.DataFlow:
+        task_id = get_uuid()
+        Pipeline(cvs.dsl, tenant_id=current_user.id, doc_id=CANVAS_DEBUG_DOC_ID, task_id=task_id, flow_id=req["id"])
+        ok, error_message = queue_dataflow(tenant_id=user_id, flow_id=req["id"], task_id=task_id, file=files[0], priority=0)
+        if not ok:
+            return get_data_error_result(message=error_message)
+        return get_json_result(data={"message_id": task_id})
+
     try:
         canvas = Canvas(cvs.dsl, current_user.id, req["id"])
     except Exception as e:
```

```diff
@@ -173,6 +177,35 @@ def run():
     return resp
 
 
+@manager.route('/rerun', methods=['POST']) # noqa: F821
+@validate_request("id", "dsl", "component_id")
+@login_required
+def rerun():
+    req = request.json
+    doc = PipelineOperationLogService.get_documents_info(req["id"])
+    if not doc:
+        return get_data_error_result(message="Document not found.")
+    doc = doc[0]
+    if 0 < doc["progress"] < 1:
+        return get_data_error_result(message=f"`{doc['name']}` is processing...")
+
+    dsl = req["dsl"]
+    dsl["path"] = [req["component_id"]]
+    PipelineOperationLogService.update_by_id(req["id"], {"dsl": dsl})
+    queue_dataflow(tenant_id=current_user.id, flow_id=req["id"], task_id=get_uuid(), doc_id=doc["id"], priority=0, rerun=True)
+    return get_json_result(data=True)
+
+
+@manager.route('/cancel/<task_id>', methods=['PUT']) # noqa: F821
+@login_required
+def cancel(task_id):
+    try:
+        REDIS_CONN.set(f"{task_id}-cancel", "x")
+    except Exception as e:
+        logging.exception(e)
+    return get_json_result(data=True)
+
+
 @manager.route('/reset', methods=['POST']) # noqa: F821
 @validate_request("id")
 @login_required
```
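The new `/cancel/<task_id>` endpoint only writes a `{task_id}-cancel` flag; it does not stop anything by itself, so presumably the dataflow worker checks for that key while it runs (the worker side is not part of this diff). A minimal sketch of that cooperative-cancellation pattern, assuming an in-memory stand-in for `REDIS_CONN` and a worker that checks the flag between steps; `FakeKV` and `run_steps` are hypothetical.

```python
from typing import Dict, Iterable, List, Optional


class FakeKV:
    """In-memory stand-in for the Redis connection (hypothetical)."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def set(self, key: str, value: str) -> None:
        self._data[key] = value

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)


def cancel_key(task_id: str) -> str:
    # Same key shape as the /cancel/<task_id> endpoint writes.
    return f"{task_id}-cancel"


def run_steps(kv: FakeKV, task_id: str, steps: Iterable[str]) -> List[str]:
    """Run steps in order, stopping before the next one once the flag is set."""
    done: List[str] = []
    for step in steps:
        # The flag is only checked between steps; a step in progress is not interrupted.
        if kv.get(cancel_key(task_id)):
            break
        done.append(step)
    return done
```

Because the endpoint swallows Redis errors and always returns `True`, a client cannot tell from the response whether the flag was actually written.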

```diff
@@ -332,7 +365,7 @@ def test_db_connect():
         if req["db_type"] in ["mysql", "mariadb"]:
             db = MySQLDatabase(req["database"], user=req["username"], host=req["host"], port=req["port"],
                                password=req["password"])
-        elif req["db_type"] == 'postgresql':
+        elif req["db_type"] == 'postgres':
             db = PostgresqlDatabase(req["database"], user=req["username"], host=req["host"], port=req["port"],
                                     password=req["password"])
         elif req["db_type"] == 'mssql':
```

```diff
@@ -383,22 +416,31 @@ def getversion( version_id):
         return get_json_result(data=f"Error getting history file: {e}")
 
 
-@manager.route('/listteam', methods=['GET']) # noqa: F821
+@manager.route('/list', methods=['GET']) # noqa: F821
 @login_required
 def list_canvas():
     keywords = request.args.get("keywords", "")
     page_number = int(request.args.get("page", 1))
     items_per_page = int(request.args.get("page_size", 150))
     orderby = request.args.get("orderby", "create_time")
-    desc = request.args.get("desc", True)
-    try:
+    canvas_category = request.args.get("canvas_category")
+    if request.args.get("desc", "true").lower() == "false":
+        desc = False
+    else:
+        desc = True
+    owner_ids = request.args.get("owner_ids", [])
+    if not owner_ids:
         tenants = TenantService.get_joined_tenants_by_user_id(current_user.id)
+        tenants = [m["tenant_id"] for m in tenants]
         canvas, total = UserCanvasService.get_by_tenant_ids(
-            [m["tenant_id"] for m in tenants], current_user.id, page_number,
-            items_per_page, orderby, desc, keywords, canvas_category=CanvasCategory.Agent)
-        return get_json_result(data={"canvas": canvas, "total": total})
-    except Exception as e:
-        return server_error_response(e)
+            tenants, current_user.id, page_number,
+            items_per_page, orderby, desc, keywords, canvas_category)
+    else:
+        tenants = owner_ids
+        canvas, total = UserCanvasService.get_by_tenant_ids(
+            tenants, current_user.id, 0,
+            0, orderby, desc, keywords, canvas_category)
+    return get_json_result(data={"canvas": canvas, "total": total})
 
 
 @manager.route('/setting', methods=['POST']) # noqa: F821
```
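The reworked `list_canvas` stops passing the raw `desc` query value through. The removed line `request.args.get("desc", True)` returned the string itself, so `?desc=false` produced the non-empty, and therefore truthy, string `"false"` if the service only tests truthiness. The new code compares the lowercased value against `"false"`. A small sketch of both behaviours, assuming a plain dict in place of Flask's `request.args` (`get(key, default)` behaves the same way for a single value); the function names are hypothetical.

```python
from typing import Mapping, Union


def parse_desc(args: Mapping[str, str]) -> bool:
    """New behaviour: descending unless the query says desc=false (any case)."""
    return args.get("desc", "true").lower() != "false"


def parse_desc_old(args: Mapping[str, str]) -> Union[str, bool]:
    """Removed behaviour: returns the raw string, so "false" is still truthy."""
    return args.get("desc", True)
```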

```diff
@@ -1,353 +0,0 @@
-#
-# Copyright 2024 The InfiniFlow Authors. All Rights Reserved.
-#
-# Licensed under the Apache License, Version 2.0 (the "License");
-# you may not use this file except in compliance with the License.
-# You may obtain a copy of the License at
-#
-# http://www.apache.org/licenses/LICENSE-2.0
-#
-# Unless required by applicable law or agreed to in writing, software
-# distributed under the License is distributed on an "AS IS" BASIS,
-# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
-# See the License for the specific language governing permissions and
-# limitations under the License.
-#
-import json
-import re
-import sys
-import time
-from functools import partial
-
-import trio
-from flask import request
-from flask_login import current_user, login_required
-
-from agent.canvas import Canvas
-from agent.component import LLM
-from api.db import CanvasCategory, FileType
-from api.db.services.canvas_service import CanvasTemplateService, UserCanvasService
-from api.db.services.document_service import DocumentService
-from api.db.services.file_service import FileService
-from api.db.services.task_service import queue_dataflow
-from api.db.services.user_canvas_version import UserCanvasVersionService
-from api.db.services.user_service import TenantService
-from api.settings import RetCode
-from api.utils import get_uuid
-from api.utils.api_utils import get_data_error_result, get_json_result, server_error_response, validate_request
-from api.utils.file_utils import filename_type, read_potential_broken_pdf
-from rag.flow.pipeline import Pipeline
-
-
```

```diff
-@manager.route("/templates", methods=["GET"]) # noqa: F821
-@login_required
-def templates():
-    return get_json_result(data=[c.to_dict() for c in CanvasTemplateService.query(canvas_category=CanvasCategory.DataFlow)])
-
-
-@manager.route("/list", methods=["GET"]) # noqa: F821
-@login_required
-def canvas_list():
-    return get_json_result(data=sorted([c.to_dict() for c in UserCanvasService.query(user_id=current_user.id, canvas_category=CanvasCategory.DataFlow)], key=lambda x: x["update_time"] * -1))
-
-
-@manager.route("/rm", methods=["POST"]) # noqa: F821
-@validate_request("canvas_ids")
-@login_required
-def rm():
-    for i in request.json["canvas_ids"]:
-        if not UserCanvasService.accessible(i, current_user.id):
-            return get_json_result(data=False, message="Only owner of canvas authorized for this operation.", code=RetCode.OPERATING_ERROR)
-        UserCanvasService.delete_by_id(i)
-    return get_json_result(data=True)
-
-
```

```diff
-@manager.route("/set", methods=["POST"]) # noqa: F821
-@validate_request("dsl", "title")
-@login_required
-def save():
-    req = request.json
-    if not isinstance(req["dsl"], str):
-        req["dsl"] = json.dumps(req["dsl"], ensure_ascii=False)
-    req["dsl"] = json.loads(req["dsl"])
-    req["canvas_category"] = CanvasCategory.DataFlow
-    if "id" not in req:
-        req["user_id"] = current_user.id
-        if UserCanvasService.query(user_id=current_user.id, title=req["title"].strip(), canvas_category=CanvasCategory.DataFlow):
-            return get_data_error_result(message=f"{req['title'].strip()} already exists.")
-        req["id"] = get_uuid()
-
-        if not UserCanvasService.save(**req):
-            return get_data_error_result(message="Fail to save canvas.")
-    else:
-        if not UserCanvasService.accessible(req["id"], current_user.id):
-            return get_json_result(data=False, message="Only owner of canvas authorized for this operation.", code=RetCode.OPERATING_ERROR)
-        UserCanvasService.update_by_id(req["id"], req)
-    # save version
-    UserCanvasVersionService.insert(user_canvas_id=req["id"], dsl=req["dsl"], title="{0}_{1}".format(req["title"], time.strftime("%Y_%m_%d_%H_%M_%S")))
-    UserCanvasVersionService.delete_all_versions(req["id"])
-    return get_json_result(data=req)
-
-
```

```diff
-@manager.route("/get/<canvas_id>", methods=["GET"]) # noqa: F821
-@login_required
-def get(canvas_id):
-    if not UserCanvasService.accessible(canvas_id, current_user.id):
-        return get_data_error_result(message="canvas not found.")
-    e, c = UserCanvasService.get_by_tenant_id(canvas_id)
-    return get_json_result(data=c)
-
-
-@manager.route("/run", methods=["POST"]) # noqa: F821
-@validate_request("id")
-@login_required
-def run():
-    req = request.json
-    flow_id = req.get("id", "")
-    doc_id = req.get("doc_id", "")
-    if not all([flow_id, doc_id]):
-        return get_data_error_result(message="id and doc_id are required.")
-
-    if not DocumentService.get_by_id(doc_id):
-        return get_data_error_result(message=f"Document for {doc_id} not found.")
-
-    user_id = req.get("user_id", current_user.id)
-    if not UserCanvasService.accessible(flow_id, current_user.id):
-        return get_json_result(data=False, message="Only owner of canvas authorized for this operation.", code=RetCode.OPERATING_ERROR)
-
-    e, cvs = UserCanvasService.get_by_id(flow_id)
-    if not e:
-        return get_data_error_result(message="canvas not found.")
-
-    if not isinstance(cvs.dsl, str):
-        cvs.dsl = json.dumps(cvs.dsl, ensure_ascii=False)
-
-    task_id = get_uuid()
-
-    ok, error_message = queue_dataflow(dsl=cvs.dsl, tenant_id=user_id, doc_id=doc_id, task_id=task_id, flow_id=flow_id, priority=0)
-    if not ok:
-        return server_error_response(error_message)
-
-    return get_json_result(data={"task_id": task_id, "flow_id": flow_id})
-
-
```

```diff
-@manager.route("/reset", methods=["POST"]) # noqa: F821
-@validate_request("id")
-@login_required
-def reset():
-    req = request.json
-    flow_id = req.get("id", "")
-    if not flow_id:
-        return get_data_error_result(message="id is required.")
-
-    if not UserCanvasService.accessible(flow_id, current_user.id):
-        return get_json_result(data=False, message="Only owner of canvas authorized for this operation.", code=RetCode.OPERATING_ERROR)
-
-    task_id = req.get("task_id", "")
-
-    try:
-        e, user_canvas = UserCanvasService.get_by_id(req["id"])
-        if not e:
-            return get_data_error_result(message="canvas not found.")
-
-        dataflow = Pipeline(dsl=json.dumps(user_canvas.dsl), tenant_id=current_user.id, flow_id=flow_id, task_id=task_id)
-        dataflow.reset()
-        req["dsl"] = json.loads(str(dataflow))
-        UserCanvasService.update_by_id(req["id"], {"dsl": req["dsl"]})
-        return get_json_result(data=req["dsl"])
-    except Exception as e:
-        return server_error_response(e)
-
-
```

```diff
-@manager.route("/upload/<canvas_id>", methods=["POST"]) # noqa: F821
-def upload(canvas_id):
-    e, cvs = UserCanvasService.get_by_tenant_id(canvas_id)
-    if not e:
-        return get_data_error_result(message="canvas not found.")
-
-    user_id = cvs["user_id"]
-
-    def structured(filename, filetype, blob, content_type):
-        nonlocal user_id
-        if filetype == FileType.PDF.value:
-            blob = read_potential_broken_pdf(blob)
-
-        location = get_uuid()
-        FileService.put_blob(user_id, location, blob)
-
-        return {
-            "id": location,
-            "name": filename,
-            "size": sys.getsizeof(blob),
-            "extension": filename.split(".")[-1].lower(),
-            "mime_type": content_type,
-            "created_by": user_id,
-            "created_at": time.time(),
-            "preview_url": None,
-        }
-
-    if request.args.get("url"):
-        from crawl4ai import AsyncWebCrawler, BrowserConfig, CrawlerRunConfig, CrawlResult, DefaultMarkdownGenerator, PruningContentFilter
-
-        try:
-            url = request.args.get("url")
-            filename = re.sub(r"\?.*", "", url.split("/")[-1])
-
-            async def adownload():
-                browser_config = BrowserConfig(
-                    headless=True,
-                    verbose=False,
-                )
-                async with AsyncWebCrawler(config=browser_config) as crawler:
-                    crawler_config = CrawlerRunConfig(markdown_generator=DefaultMarkdownGenerator(content_filter=PruningContentFilter()), pdf=True, screenshot=False)
-                    result: CrawlResult = await crawler.arun(url=url, config=crawler_config)
-                    return result
-
-            page = trio.run(adownload())
-            if page.pdf:
-                if filename.split(".")[-1].lower() != "pdf":
-                    filename += ".pdf"
-                return get_json_result(data=structured(filename, "pdf", page.pdf, page.response_headers["content-type"]))
-
-            return get_json_result(data=structured(filename, "html", str(page.markdown).encode("utf-8"), page.response_headers["content-type"], user_id))
-
-        except Exception as e:
-            return server_error_response(e)
-
-    file = request.files["file"]
-    try:
-        DocumentService.check_doc_health(user_id, file.filename)
-        return get_json_result(data=structured(file.filename, filename_type(file.filename), file.read(), file.content_type))
-    except Exception as e:
-        return server_error_response(e)
-
-
```
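In the removed `structured()` helper, `"size"` is computed with `sys.getsizeof(blob)`, which reports the memory footprint of the Python `bytes` object (payload plus per-object overhead in CPython) rather than the number of bytes uploaded. The HTML branch also calls `structured(...)` with five positional arguments against a four-parameter signature, which would raise `TypeError` and surface through `server_error_response`. A short comparison of the two size measures, assuming `len()` is the intended one; `payload_size` is a hypothetical helper.

```python
import sys


def payload_size(blob: bytes) -> int:
    """Number of bytes in the uploaded payload."""
    return len(blob)


pdf_header = b"%PDF-1.7\n"
# sys.getsizeof measures the bytes *object*, which in CPython includes a fixed
# per-object overhead on top of the payload, so it is always larger than len().
object_size = sys.getsizeof(pdf_header)
```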

```diff
-@manager.route("/input_form", methods=["GET"]) # noqa: F821
-@login_required
-def input_form():
-    flow_id = request.args.get("id")
-    cpn_id = request.args.get("component_id")
-    try:
-        e, user_canvas = UserCanvasService.get_by_id(flow_id)
-        if not e:
-            return get_data_error_result(message="canvas not found.")
-        if not UserCanvasService.query(user_id=current_user.id, id=flow_id):
-            return get_json_result(data=False, message="Only owner of canvas authorized for this operation.", code=RetCode.OPERATING_ERROR)
-
-        dataflow = Pipeline(dsl=json.dumps(user_canvas.dsl), tenant_id=current_user.id, flow_id=flow_id, task_id="")
-
-        return get_json_result(data=dataflow.get_component_input_form(cpn_id))
-    except Exception as e:
-        return server_error_response(e)
-
-
```

```diff
-@manager.route("/debug", methods=["POST"]) # noqa: F821
-@validate_request("id", "component_id", "params")
-@login_required
-def debug():
-    req = request.json
-    if not UserCanvasService.accessible(req["id"], current_user.id):
-        return get_json_result(data=False, message="Only owner of canvas authorized for this operation.", code=RetCode.OPERATING_ERROR)
-    try:
-        e, user_canvas = UserCanvasService.get_by_id(req["id"])
-        canvas = Canvas(json.dumps(user_canvas.dsl), current_user.id)
-        canvas.reset()
-        canvas.message_id = get_uuid()
-        component = canvas.get_component(req["component_id"])["obj"]
-        component.reset()
-
-        if isinstance(component, LLM):
-            component.set_debug_inputs(req["params"])
-        component.invoke(**{k: o["value"] for k, o in req["params"].items()})
-        outputs = component.output()
-        for k in outputs.keys():
-            if isinstance(outputs[k], partial):
-                txt = ""
-                for c in outputs[k]():
-                    txt += c
-                outputs[k] = txt
-        return get_json_result(data=outputs)
-    except Exception as e:
-        return server_error_response(e)
-
-
```

# api get list version dsl of canvas

|

||||

@manager.route("/getlistversion/<canvas_id>", methods=["GET"]) # noqa: F821

|

||||

@login_required

|

||||

def getlistversion(canvas_id):

|

||||

try:

|

||||

list = sorted([c.to_dict() for c in UserCanvasVersionService.list_by_canvas_id(canvas_id)], key=lambda x: x["update_time"] * -1)

|

||||

return get_json_result(data=list)

|

||||

except Exception as e:

|

||||

return get_data_error_result(message=f"Error getting history files: {e}")

|

||||

|

||||

|

||||

# api get version dsl of canvas

|

||||

@manager.route("/getversion/<version_id>", methods=["GET"]) # noqa: F821

|

||||

@login_required

|

||||

def getversion(version_id):

|

||||

try:

|

||||

e, version = UserCanvasVersionService.get_by_id(version_id)

|

||||

if version:

|

||||

return get_json_result(data=version.to_dict())

|

||||

except Exception as e:

|

||||

return get_json_result(data=f"Error getting history file: {e}")

|

||||

|

||||

|

||||

```python
@manager.route("/listteam", methods=["GET"])  # noqa: F821
@login_required
def list_canvas():
    keywords = request.args.get("keywords", "")
    page_number = int(request.args.get("page", 1))
    items_per_page = int(request.args.get("page_size", 150))
    orderby = request.args.get("orderby", "create_time")
    desc = request.args.get("desc", True)
    try:
        tenants = TenantService.get_joined_tenants_by_user_id(current_user.id)
        canvas, total = UserCanvasService.get_by_tenant_ids(
            [m["tenant_id"] for m in tenants], current_user.id, page_number, items_per_page, orderby, desc, keywords, canvas_category=CanvasCategory.DataFlow
        )
        return get_json_result(data={"canvas": canvas, "total": total})
    except Exception as e:
        return server_error_response(e)
```
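`list_canvas` passes `request.args.get("desc", True)` straight through, so a supplied `?desc=false` arrives as the non-empty string `"false"`, which is truthy wherever it is tested that way. The pipeline-log endpoints further down parse the flag explicitly instead. A small sketch of that parsing as a reusable helper; `parse_bool_arg` is a hypothetical name and the accepted spellings are an assumption:

```python
from typing import Mapping, Optional


def parse_bool_arg(args: Mapping[str, str], name: str, default: bool = True) -> bool:
    """Interpret a query-string flag such as ?desc=false as a real boolean.

    Query values always arrive as strings, and bool("false") is True, so the
    value is compared against explicit spellings instead of being truth-tested.
    Unknown spellings fall back to the default.
    """
    raw: Optional[str] = args.get(name)
    if raw is None:
        return default
    value = raw.strip().lower()
    if value in ("1", "true", "yes", "on"):
        return True
    if value in ("0", "false", "no", "off"):
        return False
    return default


print(bool("false"))                               # True
print(parse_bool_arg({"desc": "false"}, "desc"))   # False
```

Flask's `request.args` is a Mapping, so it can be passed in directly.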

```python
@manager.route("/setting", methods=["POST"])  # noqa: F821
@validate_request("id", "title", "permission")
@login_required
def setting():
    req = request.json
    req["user_id"] = current_user.id

    if not UserCanvasService.accessible(req["id"], current_user.id):
        return get_json_result(data=False, message="Only owner of canvas authorized for this operation.", code=RetCode.OPERATING_ERROR)

    e, flow = UserCanvasService.get_by_id(req["id"])
    if not e:
        return get_data_error_result(message="canvas not found.")
    flow = flow.to_dict()
    flow["title"] = req["title"]
    for key in ("description", "permission", "avatar"):
        if value := req.get(key):
            flow[key] = value

    num = UserCanvasService.update_by_id(req["id"], flow)
    return get_json_result(data=num)
```

```python
@manager.route("/trace", methods=["GET"])  # noqa: F821
def trace():
    dataflow_id = request.args.get("dataflow_id")
    task_id = request.args.get("task_id")
    if not all([dataflow_id, task_id]):
        return get_data_error_result(message="dataflow_id and task_id are required.")

    e, dataflow_canvas = UserCanvasService.get_by_id(dataflow_id)
    if not e:
        return get_data_error_result(message="dataflow not found.")

    dsl_str = json.dumps(dataflow_canvas.dsl, ensure_ascii=False)
    dataflow = Pipeline(dsl=dsl_str, tenant_id=dataflow_canvas.user_id, flow_id=dataflow_id, task_id=task_id)
    log = dataflow.fetch_logs()

    return get_json_result(data=log)
```

```diff
@@ -32,7 +32,7 @@ from api.db.services.document_service import DocumentService, doc_upload_and_par
 from api.db.services.file2document_service import File2DocumentService
 from api.db.services.file_service import FileService
 from api.db.services.knowledgebase_service import KnowledgebaseService
-from api.db.services.task_service import TaskService, cancel_all_task_of, queue_tasks
+from api.db.services.task_service import TaskService, cancel_all_task_of, queue_tasks, queue_dataflow
 from api.db.services.user_service import UserTenantService
 from api.utils import get_uuid
 from api.utils.api_utils import (
```

```diff
@@ -182,6 +182,7 @@ def create():
                 "id": get_uuid(),
                 "kb_id": kb.id,
                 "parser_id": kb.parser_id,
+                "pipeline_id": kb.pipeline_id,
                 "parser_config": kb.parser_config,
                 "created_by": current_user.id,
                 "type": FileType.VIRTUAL,
```

```diff
@@ -479,8 +480,11 @@ def run():
                         kb_table_num_map[kb_id] = count
                         if kb_table_num_map[kb_id] <= 0:
                             KnowledgebaseService.delete_field_map(kb_id)
-                bucket, name = File2DocumentService.get_storage_address(doc_id=doc["id"])
-                queue_tasks(doc, bucket, name, 0)
+                if doc.get("pipeline_id", ""):
+                    queue_dataflow(tenant_id, flow_id=doc["pipeline_id"], task_id=get_uuid(), doc_id=id)
+                else:
+                    bucket, name = File2DocumentService.get_storage_address(doc_id=doc["id"])
+                    queue_tasks(doc, bucket, name, 0)
 
         return get_json_result(data=True)
     except Exception as e:
```

```diff
@@ -546,31 +550,22 @@ def get(doc_id):
 
 @manager.route("/change_parser", methods=["POST"])  # noqa: F821
 @login_required
-@validate_request("doc_id", "parser_id")
+@validate_request("doc_id")
 def change_parser():
     req = request.json
 
     if not DocumentService.accessible(req["doc_id"], current_user.id):
         return get_json_result(data=False, message="No authorization.", code=settings.RetCode.AUTHENTICATION_ERROR)
-    try:
-        e, doc = DocumentService.get_by_id(req["doc_id"])
-        if not e:
-            return get_data_error_result(message="Document not found!")
-        if doc.parser_id.lower() == req["parser_id"].lower():
-            if "parser_config" in req:
-                if req["parser_config"] == doc.parser_config:
-                    return get_json_result(data=True)
-            else:
-                return get_json_result(data=True)
-
-        if (doc.type == FileType.VISUAL and req["parser_id"] != "picture") or (re.search(r"\.(ppt|pptx|pages)$", doc.name) and req["parser_id"] != "presentation"):
-            return get_data_error_result(message="Not supported yet!")
+    e, doc = DocumentService.get_by_id(req["doc_id"])
+    if not e:
+        return get_data_error_result(message="Document not found!")
 
+    def reset_doc():
+        nonlocal doc
         e = DocumentService.update_by_id(doc.id, {"parser_id": req["parser_id"], "progress": 0, "progress_msg": "", "run": TaskStatus.UNSTART.value})
         if not e:
             return get_data_error_result(message="Document not found!")
-        if "parser_config" in req:
-            DocumentService.update_parser_config(doc.id, req["parser_config"])
         if doc.token_num > 0:
             e = DocumentService.increment_chunk_num(doc.id, doc.kb_id, doc.token_num * -1, doc.chunk_num * -1, doc.process_duration * -1)
             if not e:
```

```diff
@@ -581,6 +576,26 @@ def change_parser():
             if settings.docStoreConn.indexExist(search.index_name(tenant_id), doc.kb_id):
                 settings.docStoreConn.delete({"doc_id": doc.id}, search.index_name(tenant_id), doc.kb_id)
 
+    try:
+        if req.get("pipeline_id"):
+            if doc.pipeline_id == req["pipeline_id"]:
+                return get_json_result(data=True)
+            DocumentService.update_by_id(doc.id, {"pipeline_id": req["pipeline_id"]})
+            reset_doc()
+            return get_json_result(data=True)
+
+        if doc.parser_id.lower() == req["parser_id"].lower():
+            if "parser_config" in req:
+                if req["parser_config"] == doc.parser_config:
+                    return get_json_result(data=True)
+            else:
+                return get_json_result(data=True)
+
+        if (doc.type == FileType.VISUAL and req["parser_id"] != "picture") or (re.search(r"\.(ppt|pptx|pages)$", doc.name) and req["parser_id"] != "presentation"):
+            return get_data_error_result(message="Not supported yet!")
+        if "parser_config" in req:
+            DocumentService.update_parser_config(doc.id, req["parser_config"])
+        reset_doc()
         return get_json_result(data=True)
     except Exception as e:
         return server_error_response(e)
```
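After this change, `change_parser` checks a requested `pipeline_id` first and only then falls back to the `parser_id` comparison and the file-type guard. The branch order can be sketched as a pure function; `plan_parser_change`, its return labels, and the `is_visual` flag (standing in for `doc.type == FileType.VISUAL`) are illustrative assumptions, not RAGFlow APIs:

```python
import re
from typing import Any, Dict

# Same pattern as the handler's slide-deck check (case-sensitive, as there).
_PRESENTATION_RE = re.compile(r"\.(ppt|pptx|pages)$")


def plan_parser_change(doc: Dict[str, Any], req: Dict[str, Any]) -> str:
    """Return which branch the new change_parser handler would take.

    Assumed doc keys: parser_id, parser_config, pipeline_id, is_visual, name.
    Result is one of "noop", "switch_pipeline", "unsupported", "reparse".
    """
    # 1. A pipeline_id in the request takes precedence over parser_id.
    if req.get("pipeline_id"):
        if doc.get("pipeline_id") == req["pipeline_id"]:
            return "noop"
        return "switch_pipeline"

    # 2. Same parser: only a changed parser_config forces a re-parse.
    if doc["parser_id"].lower() == req["parser_id"].lower():
        if "parser_config" not in req or req["parser_config"] == doc["parser_config"]:
            return "noop"

    # 3. Visual files need "picture"; slide decks need "presentation".
    if (doc["is_visual"] and req["parser_id"] != "picture") or (
        _PRESENTATION_RE.search(doc["name"]) and req["parser_id"] != "presentation"
    ):
        return "unsupported"
    return "reparse"


doc = {"parser_id": "naive", "parser_config": {"chunk_token_num": 128},
       "pipeline_id": "p1", "is_visual": False, "name": "deck.pptx"}
print(plan_parser_change(doc, {"pipeline_id": "p2"}))       # switch_pipeline
print(plan_parser_change(doc, {"parser_id": "Naive"}))      # noop
print(plan_parser_change(doc, {"parser_id": "presentation"}))  # reparse
```

As in the handler, the extension regex is case-sensitive, so a file named `DECK.PPTX` would not trigger the presentation rule.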

```diff
@@ -14,18 +14,21 @@
 # limitations under the License.
 #
 import json
+import logging
 
 from flask import request
 from flask_login import login_required, current_user
 
 from api.db.services import duplicate_name
-from api.db.services.document_service import DocumentService
+from api.db.services.document_service import DocumentService, queue_raptor_o_graphrag_tasks
 from api.db.services.file2document_service import File2DocumentService
 from api.db.services.file_service import FileService
+from api.db.services.pipeline_operation_log_service import PipelineOperationLogService
+from api.db.services.task_service import TaskService, GRAPH_RAPTOR_FAKE_DOC_ID
 from api.db.services.user_service import TenantService, UserTenantService
-from api.utils.api_utils import server_error_response, get_data_error_result, validate_request, not_allowed_parameters
+from api.utils.api_utils import get_error_data_result, server_error_response, get_data_error_result, validate_request, not_allowed_parameters
 from api.utils import get_uuid
-from api.db import StatusEnum, FileSource
+from api.db import StatusEnum, FileSource, VALID_FILE_TYPES
 from api.db.services.knowledgebase_service import KnowledgebaseService
 from api.db.db_models import File
 from api.utils.api_utils import get_json_result
```

```diff
@@ -35,7 +38,6 @@ from api.constants import DATASET_NAME_LIMIT
 from rag.settings import PAGERANK_FLD
 from rag.utils.storage_factory import STORAGE_IMPL
 
-
 @manager.route('/create', methods=['post'])  # noqa: F821
 @login_required
 @validate_request("name")
```

```diff
@@ -61,10 +63,12 @@ def create():
         req["name"] = dataset_name
         req["tenant_id"] = current_user.id
         req["created_by"] = current_user.id
+        if not req.get("parser_id"):
+            req["parser_id"] = "naive"
         e, t = TenantService.get_by_id(current_user.id)
         if not e:
             return get_data_error_result(message="Tenant not found.")
-        req["embd_id"] = t.embd_id
+        #req["embd_id"] = t.embd_id
         if not KnowledgebaseService.save(**req):
             return get_data_error_result()
         return get_json_result(data={"kb_id": req["id"]})
```

@@ -379,3 +383,333 @@ def get_meta():

```python
                code=settings.RetCode.AUTHENTICATION_ERROR
            )
    return get_json_result(data=DocumentService.get_meta_by_kbs(kb_ids))


@manager.route("/basic_info", methods=["GET"])  # noqa: F821
@login_required
def get_basic_info():
    kb_id = request.args.get("kb_id", "")
    if not KnowledgebaseService.accessible(kb_id, current_user.id):
        return get_json_result(
            data=False,
            message='No authorization.',
            code=settings.RetCode.AUTHENTICATION_ERROR
        )

    basic_info = DocumentService.knowledgebase_basic_info(kb_id)

    return get_json_result(data=basic_info)
```

```python
@manager.route("/list_pipeline_logs", methods=["POST"])  # noqa: F821
@login_required
def list_pipeline_logs():
    kb_id = request.args.get("kb_id")
    if not kb_id:
        return get_json_result(data=False, message='Lack of "KB ID"', code=settings.RetCode.ARGUMENT_ERROR)

    keywords = request.args.get("keywords", "")

    page_number = int(request.args.get("page", 0))
    items_per_page = int(request.args.get("page_size", 0))
    orderby = request.args.get("orderby", "create_time")
    if request.args.get("desc", "true").lower() == "false":
        desc = False
    else:
        desc = True
    create_date_from = request.args.get("create_date_from", "")
    create_date_to = request.args.get("create_date_to", "")
    if create_date_to > create_date_from:
        return get_data_error_result(message="Create data filter is abnormal.")

    req = request.get_json()

    operation_status = req.get("operation_status", [])
    if operation_status:
        invalid_status = {s for s in operation_status if s not in ["success", "failed", "running", "pending"]}
        if invalid_status:
            return get_data_error_result(message=f"Invalid filter operation_status status conditions: {', '.join(invalid_status)}")

    types = req.get("types", [])
    if types:
        invalid_types = {t for t in types if t not in VALID_FILE_TYPES}
        if invalid_types:
            return get_data_error_result(message=f"Invalid filter conditions: {', '.join(invalid_types)} type{'s' if len(invalid_types) > 1 else ''}")

    suffix = req.get("suffix", [])

    try:
        logs, tol = PipelineOperationLogService.get_file_logs_by_kb_id(kb_id, page_number, items_per_page, orderby, desc, keywords, operation_status, types, suffix, create_date_from, create_date_to)
        return get_json_result(data={"total": tol, "logs": logs})
    except Exception as e:
        return server_error_response(e)
```
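As written, `if create_date_to > create_date_from` rejects a range whose end is later than its start, and also any request that sets only `create_date_to` (every non-empty string compares greater than `""`). A sketch of the check the error message appears to intend, together with the status filter, assuming `YYYY-MM-DD` strings, which order correctly under plain string comparison; the helper names are hypothetical:

```python
from typing import Iterable, Optional, Set

# Status values accepted by the operation_status filter in the endpoints above.
VALID_OPERATION_STATUS = {"success", "failed", "running", "pending"}


def date_range_error(create_date_from: str, create_date_to: str) -> Optional[str]:
    """Return an error message only when both bounds are set and start > end.

    Empty strings mean "no bound", matching the endpoints' "" defaults.
    ISO "YYYY-MM-DD" strings sort chronologically, so plain comparison works.
    """
    if create_date_from and create_date_to and create_date_from > create_date_to:
        return "Create date filter is abnormal."
    return None


def invalid_statuses(statuses: Iterable[str]) -> Set[str]:
    """Return the requested statuses that the filter does not recognise."""
    return {s for s in statuses if s not in VALID_OPERATION_STATUS}


print(date_range_error("2024-01-01", "2024-01-31"))  # None
print(date_range_error("2024-02-01", "2024-01-31"))  # Create date filter is abnormal.
print(invalid_statuses(["success", "done"]))         # {'done'}
```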

```python
@manager.route("/list_pipeline_dataset_logs", methods=["POST"])  # noqa: F821
@login_required
def list_pipeline_dataset_logs():
    kb_id = request.args.get("kb_id")
    if not kb_id:
        return get_json_result(data=False, message='Lack of "KB ID"', code=settings.RetCode.ARGUMENT_ERROR)

    page_number = int(request.args.get("page", 0))
    items_per_page = int(request.args.get("page_size", 0))
    orderby = request.args.get("orderby", "create_time")
    if request.args.get("desc", "true").lower() == "false":
        desc = False
    else:
        desc = True
    create_date_from = request.args.get("create_date_from", "")
    create_date_to = request.args.get("create_date_to", "")
    if create_date_to > create_date_from:
        return get_data_error_result(message="Create data filter is abnormal.")

    req = request.get_json()

    operation_status = req.get("operation_status", [])
    if operation_status:
        invalid_status = {s for s in operation_status if s not in ["success", "failed", "running", "pending"]}
        if invalid_status:
            return get_data_error_result(message=f"Invalid filter operation_status status conditions: {', '.join(invalid_status)}")

    try:
        logs, tol = PipelineOperationLogService.get_dataset_logs_by_kb_id(kb_id, page_number, items_per_page, orderby, desc, operation_status, create_date_from, create_date_to)
        return get_json_result(data={"total": tol, "logs": logs})
    except Exception as e:
        return server_error_response(e)
```

```python
@manager.route("/delete_pipeline_logs", methods=["POST"])  # noqa: F821
@login_required
def delete_pipeline_logs():
    kb_id = request.args.get("kb_id")
    if not kb_id:
        return get_json_result(data=False, message='Lack of "KB ID"', code=settings.RetCode.ARGUMENT_ERROR)

    req = request.get_json()
    log_ids = req.get("log_ids", [])

    PipelineOperationLogService.delete_by_ids(log_ids)

    return get_json_result(data=True)
```

```python
@manager.route("/pipeline_log_detail", methods=["GET"])  # noqa: F821
@login_required
def pipeline_log_detail():
    log_id = request.args.get("log_id")
    if not log_id:
        return get_json_result(data=False, message='Lack of "Pipeline log ID"', code=settings.RetCode.ARGUMENT_ERROR)

    ok, log = PipelineOperationLogService.get_by_id(log_id)
    if not ok:
        return get_data_error_result(message="Invalid pipeline log ID")

    return get_json_result(data=log.to_dict())
```

```python
@manager.route("/run_graphrag", methods=["POST"])  # noqa: F821
@login_required
def run_graphrag():
    req = request.json

    kb_id = req.get("kb_id", "")
    if not kb_id:
        return get_error_data_result(message='Lack of "KB ID"')

    ok, kb = KnowledgebaseService.get_by_id(kb_id)
    if not ok:
        return get_error_data_result(message="Invalid Knowledgebase ID")

    task_id = kb.graphrag_task_id
    if task_id:
        ok, task = TaskService.get_by_id(task_id)
        if not ok:
            logging.warning(f"A valid GraphRAG task id is expected for kb {kb_id}")

        if task and task.progress not in [-1, 1]:
            return get_error_data_result(message=f"Task {task_id} in progress with status {task.progress}. A Graph Task is already running.")

    documents, _ = DocumentService.get_by_kb_id(
        kb_id=kb_id,
        page_number=0,
        items_per_page=0,
        orderby="create_time",
        desc=False,
        keywords="",
        run_status=[],
        types=[],
        suffix=[],
    )
    if not documents:
        return get_error_data_result(message=f"No documents in Knowledgebase {kb_id}")

    sample_document = documents[0]
    document_ids = [document["id"] for document in documents]

    task_id = queue_raptor_o_graphrag_tasks(doc=sample_document, ty="graphrag", priority=0, fake_doc_id=GRAPH_RAPTOR_FAKE_DOC_ID, doc_ids=list(document_ids))

    if not KnowledgebaseService.update_by_id(kb.id, {"graphrag_task_id": task_id}):
        logging.warning(f"Cannot save graphrag_task_id for kb {kb_id}")

    return get_json_result(data={"graphrag_task_id": task_id})
```
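`run_graphrag` (and the RAPTOR and mindmap variants below) refuses to queue a new job while the knowledge base's recorded task has a progress value other than -1 or 1, the two values the code treats as terminal. The guard reduces to a small predicate; `can_start_new_task` is an illustrative name, and the sketch assumes a missing or unresolvable task is passed as `None`:

```python
from typing import Optional


def can_start_new_task(existing_progress: Optional[float]) -> bool:
    """Mirror the guard in run_graphrag / run_raptor / run_mindmap.

    None means no usable task is recorded for the knowledge base. The handlers
    treat progress -1 and 1 as terminal; any other value means a task of that
    kind is still running, so a new one must not be queued.
    """
    if existing_progress is None:
        return True
    return existing_progress in (-1, 1)


for progress in (None, 0.0, 0.35, 1.0, -1):
    print(progress, can_start_new_task(progress))
```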

```python
@manager.route("/trace_graphrag", methods=["GET"])  # noqa: F821
@login_required
def trace_graphrag():
    kb_id = request.args.get("kb_id", "")
    if not kb_id:
        return get_error_data_result(message='Lack of "KB ID"')

    ok, kb = KnowledgebaseService.get_by_id(kb_id)
    if not ok:
        return get_error_data_result(message="Invalid Knowledgebase ID")

    task_id = kb.graphrag_task_id
    if not task_id:
        return get_json_result(data={})

    ok, task = TaskService.get_by_id(task_id)
    if not ok:
        return get_error_data_result(message="GraphRAG Task Not Found or Error Occurred")

    return get_json_result(data=task.to_dict())
```

```python
@manager.route("/run_raptor", methods=["POST"])  # noqa: F821
@login_required
def run_raptor():
    req = request.json

    kb_id = req.get("kb_id", "")
    if not kb_id:
        return get_error_data_result(message='Lack of "KB ID"')

    ok, kb = KnowledgebaseService.get_by_id(kb_id)
    if not ok:
        return get_error_data_result(message="Invalid Knowledgebase ID")

    task_id = kb.raptor_task_id
    if task_id:
        ok, task = TaskService.get_by_id(task_id)
        if not ok:
            logging.warning(f"A valid RAPTOR task id is expected for kb {kb_id}")

        if task and task.progress not in [-1, 1]:
            return get_error_data_result(message=f"Task {task_id} in progress with status {task.progress}. A RAPTOR Task is already running.")

    documents, _ = DocumentService.get_by_kb_id(
        kb_id=kb_id,
        page_number=0,
        items_per_page=0,
        orderby="create_time",
        desc=False,
        keywords="",
        run_status=[],
        types=[],
        suffix=[],
    )
    if not documents:
        return get_error_data_result(message=f"No documents in Knowledgebase {kb_id}")

    sample_document = documents[0]
    document_ids = [document["id"] for document in documents]

    task_id = queue_raptor_o_graphrag_tasks(doc=sample_document, ty="raptor", priority=0, fake_doc_id=GRAPH_RAPTOR_FAKE_DOC_ID, doc_ids=list(document_ids))

    if not KnowledgebaseService.update_by_id(kb.id, {"raptor_task_id": task_id}):
        logging.warning(f"Cannot save raptor_task_id for kb {kb_id}")

    return get_json_result(data={"raptor_task_id": task_id})
```

```python
@manager.route("/trace_raptor", methods=["GET"])  # noqa: F821
@login_required
def trace_raptor():
    kb_id = request.args.get("kb_id", "")
    if not kb_id:
        return get_error_data_result(message='Lack of "KB ID"')

    ok, kb = KnowledgebaseService.get_by_id(kb_id)
    if not ok:
        return get_error_data_result(message="Invalid Knowledgebase ID")

    task_id = kb.raptor_task_id
    if not task_id:
        return get_json_result(data={})

    ok, task = TaskService.get_by_id(task_id)
    if not ok:
        return get_error_data_result(message="RAPTOR Task Not Found or Error Occurred")

    return get_json_result(data=task.to_dict())
```

```python
@manager.route("/run_mindmap", methods=["POST"])  # noqa: F821
@login_required
def run_mindmap():
    req = request.json

    kb_id = req.get("kb_id", "")
    if not kb_id:
        return get_error_data_result(message='Lack of "KB ID"')

    ok, kb = KnowledgebaseService.get_by_id(kb_id)
    if not ok:
        return get_error_data_result(message="Invalid Knowledgebase ID")

    task_id = kb.mindmap_task_id
    if task_id:
        ok, task = TaskService.get_by_id(task_id)
        if not ok:
            logging.warning(f"A valid Mindmap task id is expected for kb {kb_id}")

        if task and task.progress not in [-1, 1]:
            return get_error_data_result(message=f"Task {task_id} in progress with status {task.progress}. A Mindmap Task is already running.")

    documents, _ = DocumentService.get_by_kb_id(
        kb_id=kb_id,
        page_number=0,
        items_per_page=0,
        orderby="create_time",
        desc=False,
        keywords="",
        run_status=[],
        types=[],
        suffix=[],
    )
    if not documents:
        return get_error_data_result(message=f"No documents in Knowledgebase {kb_id}")

    sample_document = documents[0]
    document_ids = [document["id"] for document in documents]

    task_id = queue_raptor_o_graphrag_tasks(doc=sample_document, ty="mindmap", priority=0, fake_doc_id=GRAPH_RAPTOR_FAKE_DOC_ID, doc_ids=list(document_ids))

    if not KnowledgebaseService.update_by_id(kb.id, {"mindmap_task_id": task_id}):
        logging.warning(f"Cannot save mindmap_task_id for kb {kb_id}")

    return get_json_result(data={"mindmap_task_id": task_id})
```

```python
@manager.route("/trace_mindmap", methods=["GET"])  # noqa: F821
@login_required
def trace_mindmap():
    kb_id = request.args.get("kb_id", "")
    if not kb_id:
        return get_error_data_result(message='Lack of "KB ID"')

    ok, kb = KnowledgebaseService.get_by_id(kb_id)
    if not ok:
        return get_error_data_result(message="Invalid Knowledgebase ID")

    task_id = kb.mindmap_task_id
    if not task_id:
        return get_json_result(data={})

    ok, task = TaskService.get_by_id(task_id)
    if not ok:
        return get_error_data_result(message="Mindmap Task Not Found or Error Occurred")

    return get_json_result(data=task.to_dict())
```

```diff
@@ -414,7 +414,7 @@ def agents_completion_openai_compatibility(tenant_id, agent_id):
                 tenant_id,
                 agent_id,
                 question,
-                session_id=req.get("session_id", req.get("id", "") or req.get("metadata", {}).get("id", "")),
+                session_id=req.pop("session_id", req.get("id", "")) or req.get("metadata", {}).get("id", ""),
                 stream=True,
                 **req,
             ),
```

```diff
@@ -432,7 +432,7 @@ def agents_completion_openai_compatibility(tenant_id, agent_id):
                 tenant_id,
                 agent_id,
                 question,
-                session_id=req.get("session_id", req.get("id", "") or req.get("metadata", {}).get("id", "")),
+                session_id=req.pop("session_id", req.get("id", "")) or req.get("metadata", {}).get("id", ""),
                 stream=False,
                 **req,
             )
```
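Both completion calls switch from `req.get("session_id", ...)` to `req.pop("session_id", ...)`. Because the same `req` is also expanded with `**req`, leaving the key in place makes Python receive `session_id` twice and raise `TypeError`. The rewrite also moves the `metadata` fallback outside the default, so it now applies when `session_id` is present but empty. A self-contained reproduction with a hypothetical `completion` stand-in (not the real helper's signature):

```python
from typing import Any, Dict


def completion(tenant_id: str, agent_id: str, question: str,
               session_id: str = "", stream: bool = True, **kwargs: Any) -> str:
    """Hypothetical stand-in for the completion helper; returns the session it got."""
    return session_id


def call_with_get(req: Dict[str, Any]) -> str:
    # session_id stays in req, so **req supplies it a second time -> TypeError.
    return completion("tenant", "agent", "hi", session_id=req.get("session_id", ""), stream=False, **req)


def call_with_pop(req: Dict[str, Any]) -> str:
    # pop() removes the key before **req is expanded, so it is passed once.
    return completion("tenant", "agent", "hi", session_id=req.pop("session_id", ""), stream=False, **req)


try:
    call_with_get({"session_id": "s1", "temperature": 0.2})
except TypeError as exc:
    print("get():", exc)

print("pop():", call_with_pop({"session_id": "s1", "temperature": 0.2}))  # pop(): s1
```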

```diff
@@ -36,6 +36,8 @@ from rag.utils.storage_factory import STORAGE_IMPL, STORAGE_IMPL_TYPE
 from timeit import default_timer as timer
 
 from rag.utils.redis_conn import REDIS_CONN
+from flask import jsonify
+from api.utils.health import run_health_checks
 
 @manager.route("/version", methods=["GET"])  # noqa: F821
 @login_required
```

```diff
@@ -169,6 +171,12 @@ def status():
     return get_json_result(data=res)
 
 
+@manager.route("/healthz", methods=["GET"])  # noqa: F821
+def healthz():
+    result, all_ok = run_health_checks()
+    return jsonify(result), (200 if all_ok else 500)
+
+
 @manager.route("/new_token", methods=["POST"])  # noqa: F821
 @login_required
 def new_token():
```
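The `/healthz` handler depends only on `run_health_checks()` returning a `(result, all_ok)` pair, which it maps to HTTP 200 or 500. The checks themselves live in `api.utils.health` and are not shown in this diff, so the following is a hypothetical stand-in with made-up probes (`run_checks`, `db_probe`, and `redis_probe` are illustrative names) showing one way such a pair could be produced:

```python
from typing import Callable, Dict, Tuple


def run_checks(checks: Dict[str, Callable[[], None]]) -> Tuple[Dict[str, str], bool]:
    """Run each named probe and report (per-check status, overall ok).

    A probe signals failure by raising; the error text is kept in the report.
    """
    result: Dict[str, str] = {}
    for name, probe in checks.items():
        try:
            probe()
            result[name] = "ok"
        except Exception as exc:  # report the failure instead of propagating it
            result[name] = f"nok: {exc}"
    all_ok = all(status == "ok" for status in result.values())
    return result, all_ok


def db_probe() -> None:
    pass  # pretend the database answered


def redis_probe() -> None:
    raise ConnectionError("connection refused")  # pretend Redis is down


result, all_ok = run_checks({"db": db_probe, "redis": redis_probe})
status_code = 200 if all_ok else 500
print(result, status_code)  # {'db': 'ok', 'redis': 'nok: connection refused'} 500
```

Returning a non-2xx status on any failed dependency lets load balancers and orchestrators act on the endpoint without parsing the body.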

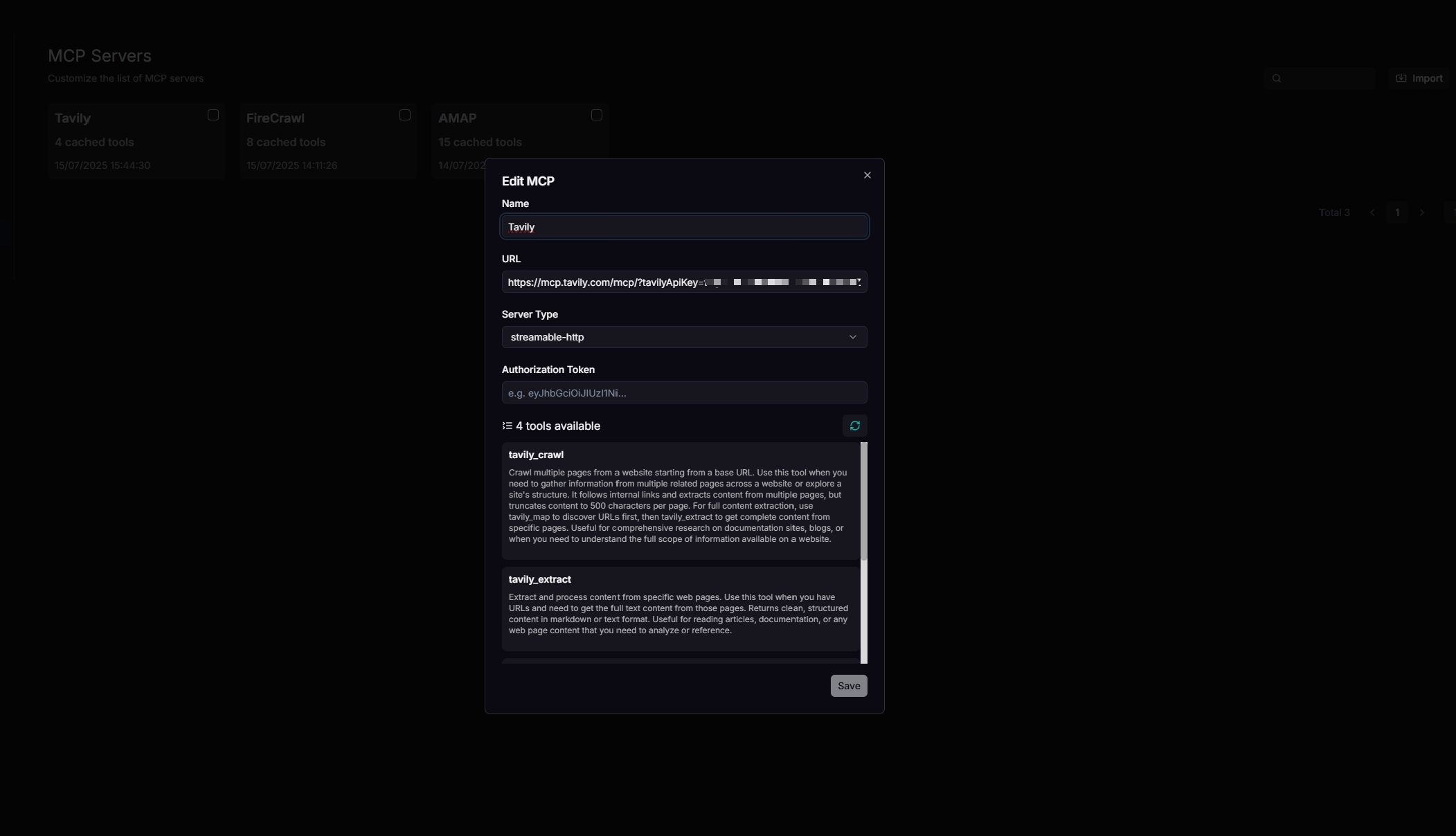

```diff
@@ -122,4 +122,15 @@ class MCPServerType(StrEnum):
 VALID_MCP_SERVER_TYPES = {MCPServerType.SSE, MCPServerType.STREAMABLE_HTTP}
 
 
+class PipelineTaskType(StrEnum):
+    PARSE = "Parse"
+    DOWNLOAD = "Download"
+    RAPTOR = "RAPTOR"
+    GRAPH_RAG = "GraphRAG"
+    MINDMAP = "Mindmap"
+
+
+VALID_PIPELINE_TASK_TYPES = {PipelineTaskType.PARSE, PipelineTaskType.DOWNLOAD, PipelineTaskType.RAPTOR, PipelineTaskType.GRAPH_RAG, PipelineTaskType.MINDMAP}
+
+
 KNOWLEDGEBASE_FOLDER_NAME=".knowledgebase"
```
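`PipelineTaskType` is declared as a `StrEnum`, so each member compares equal to its string value, and looking a value up with `PipelineTaskType(value)` raises `ValueError` for anything outside the five listed. A minimal validation sketch; it redeclares the enum with a `str` mixin (equivalent for these comparisons, and usable before Python 3.11, where the standard library's `enum.StrEnum` first appeared), and `parse_task_type` is a hypothetical helper:

```python
from enum import Enum
from typing import Optional


class PipelineTaskType(str, Enum):
    # Same members and values as in the diff; str mixin instead of StrEnum
    # so the sketch also runs on interpreters older than 3.11.
    PARSE = "Parse"
    DOWNLOAD = "Download"
    RAPTOR = "RAPTOR"
    GRAPH_RAG = "GraphRAG"
    MINDMAP = "Mindmap"


def parse_task_type(raw: str) -> Optional[PipelineTaskType]:
    """Map a stored or requested value such as "GraphRAG" to its member, else None."""
    try:
        return PipelineTaskType(raw)
    except ValueError:
        return None


print(parse_task_type("GraphRAG"))          # PipelineTaskType.GRAPH_RAG
print(parse_task_type("graphrag"))          # None
print(PipelineTaskType.MINDMAP == "Mindmap")  # True
```

Value lookup is case-sensitive, so callers that accept user input may want to normalise it before calling.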

```diff
@@ -646,8 +646,17 @@ class Knowledgebase(DataBaseModel):
     vector_similarity_weight = FloatField(default=0.3, index=True)
 
     parser_id = CharField(max_length=32, null=False, help_text="default parser ID", default=ParserType.NAIVE.value, index=True)
+    pipeline_id = CharField(max_length=32, null=True, help_text="Pipeline ID", index=True)
     parser_config = JSONField(null=False, default={"pages": [[1, 1000000]]})
     pagerank = IntegerField(default=0, index=False)
 
```