Mirror of https://github.com/infiniflow/ragflow.git, synced 2026-02-04 01:25:07 +08:00

Compare commits

42 Commits

pipeline...902703d145

| Author | SHA1 | Date | |
|---|---|---|---|
| | 902703d145 | | |
| | 7ccca2143c | | |
| | 70ce02faf4 | | |
| | 3f1741c8c6 | | |
| | 6c24ad7966 | | |
| | 4846589599 | | |
| | a24547aa66 | | |
| | a04c5247ab | | |
| | ed6a76dcc0 | | |
| | a0ccbec8bd | | |
| | 4693c5382a | | |
| | ff3b4d0dcd | | |
| | 62d35b1b73 | | |
| | 91b609447d | | |
| | c353840244 | | |
| | f12b9fdcd4 | | |
| | 80ede65bbe | | |
| | 52cf186028 | | |
| | ea0f1d47a5 | | |
| | 9fe7c92217 | | |
| | d353f7f7f8 | | |
| | f3738b06f1 | | |
| | 5a8bc88147 | | |
| | 04ef5b2783 | | |
| | c9ea22ef69 | | |
| | 152111fd9d | | |
| | 86f6da2f74 | | |
| | 8c00cbc87a | | |
| | 41e808f4e6 | | |
| | bc0281040b | | |
| | 341a7b1473 | | |
| | c29c395390 | | |
| | a23a0f230c | | |
| | 2a88ce6be1 | | |
| | 664b781d62 | | |
| | 65571e5254 | | |
| | aa30f20730 | | |
| | b9b278d441 | | |
| | e1d86cfee3 | | |
| | 8ebd07337f | | |
| | dd584d57b0 | | |
| | 3d39b96c6f | | |

8

.github/workflows/release.yml

vendored


@@ -88,7 +88,9 @@ jobs:
         with:
           context: .
           push: true
-          tags: infiniflow/ragflow:${{ env.RELEASE_TAG }}
+          tags: |
+            infiniflow/ragflow:${{ env.RELEASE_TAG }}
+            infiniflow/ragflow:latest-full
           file: Dockerfile
           platforms: linux/amd64

@@ -98,7 +100,9 @@ jobs:
         with:
           context: .
           push: true
-          tags: infiniflow/ragflow:${{ env.RELEASE_TAG }}-slim
+          tags: |
+            infiniflow/ragflow:${{ env.RELEASE_TAG }}-slim
+            infiniflow/ragflow:latest-slim
           file: Dockerfile
           build-args: LIGHTEN=1
           platforms: linux/amd64

@ -83,7 +83,7 @@

|

||||

},

|

||||

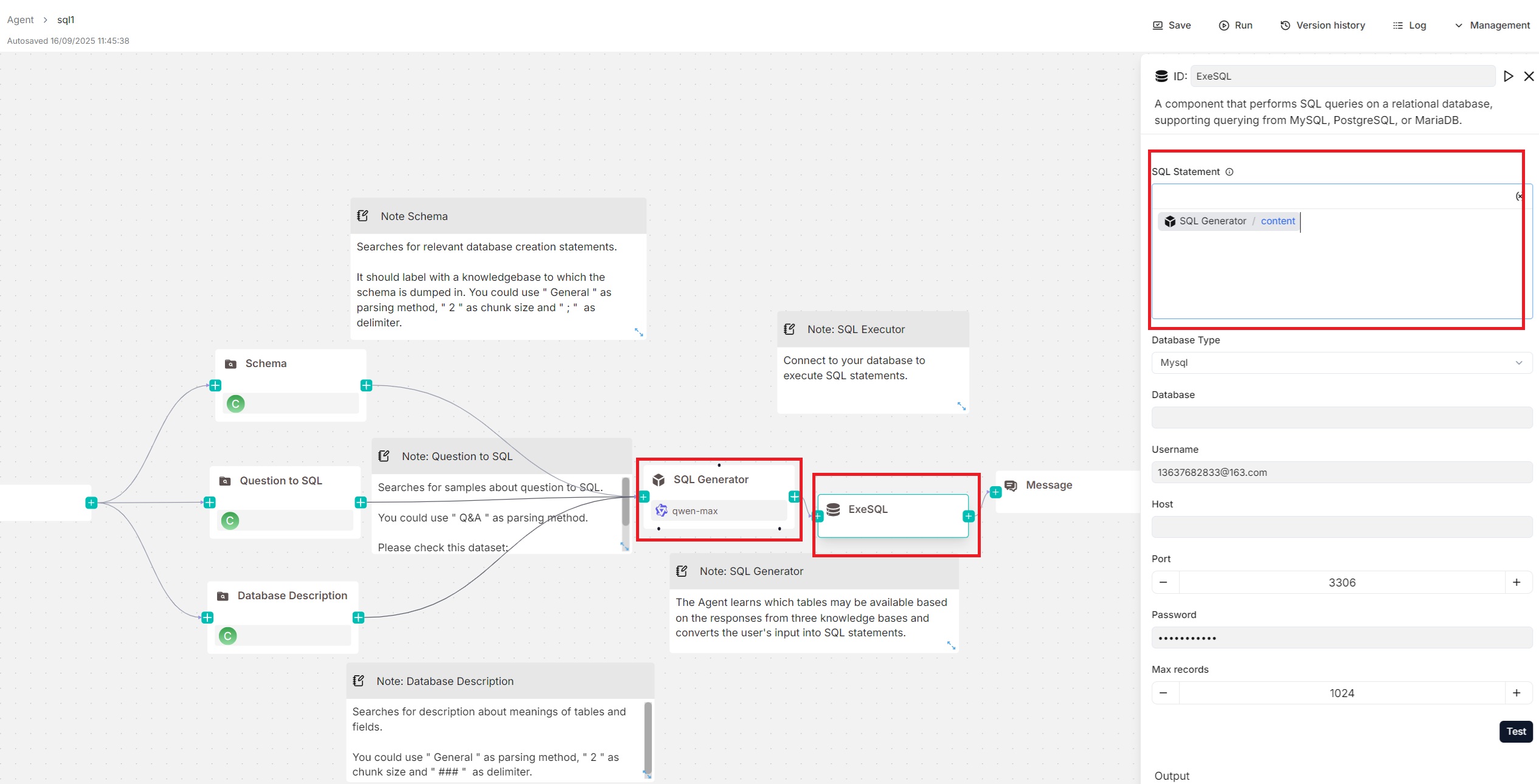

"password": "20010812Yy!",

|

||||

"port": 3306,

|

||||

"sql": "Agent:WickedGoatsDivide@content",

|

||||

"sql": "{Agent:WickedGoatsDivide@content}",

|

||||

"username": "13637682833@163.com"

|

||||

}

|

||||

},

|

||||

@ -114,9 +114,7 @@

|

||||

"params": {

|

||||

"cross_languages": [],

|

||||

"empty_response": "",

|

||||

"kb_ids": [

|

||||

"ed31364c727211f0bdb2bafe6e7908e6"

|

||||

],

|

||||

"kb_ids": [],

|

||||

"keywords_similarity_weight": 0.7,

|

||||

"outputs": {

|

||||

"formalized_content": {

|

||||

@ -124,7 +122,7 @@

|

||||

"value": ""

|

||||

}

|

||||

},

|

||||

"query": "sys.query",

|

||||

"query": "{sys.query}",

|

||||

"rerank_id": "",

|

||||

"similarity_threshold": 0.2,

|

||||

"top_k": 1024,

|

||||

@ -145,9 +143,7 @@

|

||||

"params": {

|

||||

"cross_languages": [],

|

||||

"empty_response": "",

|

||||

"kb_ids": [

|

||||

"0f968106727311f08357bafe6e7908e6"

|

||||

],

|

||||

"kb_ids": [],

|

||||

"keywords_similarity_weight": 0.7,

|

||||

"outputs": {

|

||||

"formalized_content": {

|

||||

@ -155,7 +151,7 @@

|

||||

"value": ""

|

||||

}

|

||||

},

|

||||

"query": "sys.query",

|

||||

"query": "{sys.query}",

|

||||

"rerank_id": "",

|

||||

"similarity_threshold": 0.2,

|

||||

"top_k": 1024,

|

||||

@ -176,9 +172,7 @@

|

||||

"params": {

|

||||

"cross_languages": [],

|

||||

"empty_response": "",

|

||||

"kb_ids": [

|

||||

"4ad1f9d0727311f0827dbafe6e7908e6"

|

||||

],

|

||||

"kb_ids": [],

|

||||

"keywords_similarity_weight": 0.7,

|

||||

"outputs": {

|

||||

"formalized_content": {

|

||||

@ -186,7 +180,7 @@

|

||||

"value": ""

|

||||

}

|

||||

},

|

||||

"query": "sys.query",

|

||||

"query": "{sys.query}",

|

||||

"rerank_id": "",

|

||||

"similarity_threshold": 0.2,

|

||||

"top_k": 1024,

|

||||

@ -347,9 +341,7 @@

|

||||

"form": {

|

||||

"cross_languages": [],

|

||||

"empty_response": "",

|

||||

"kb_ids": [

|

||||

"ed31364c727211f0bdb2bafe6e7908e6"

|

||||

],

|

||||

"kb_ids": [],

|

||||

"keywords_similarity_weight": 0.7,

|

||||

"outputs": {

|

||||

"formalized_content": {

|

||||

@ -357,7 +349,7 @@

|

||||

"value": ""

|

||||

}

|

||||

},

|

||||

"query": "sys.query",

|

||||

"query": "{sys.query}",

|

||||

"rerank_id": "",

|

||||

"similarity_threshold": 0.2,

|

||||

"top_k": 1024,

|

||||

@ -387,9 +379,7 @@

|

||||

"form": {

|

||||

"cross_languages": [],

|

||||

"empty_response": "",

|

||||

"kb_ids": [

|

||||

"0f968106727311f08357bafe6e7908e6"

|

||||

],

|

||||

"kb_ids": [],

|

||||

"keywords_similarity_weight": 0.7,

|

||||

"outputs": {

|

||||

"formalized_content": {

|

||||

@ -397,7 +387,7 @@

|

||||

"value": ""

|

||||

}

|

||||

},

|

||||

"query": "sys.query",

|

||||

"query": "{sys.query}",

|

||||

"rerank_id": "",

|

||||

"similarity_threshold": 0.2,

|

||||

"top_k": 1024,

|

||||

@ -427,9 +417,7 @@

|

||||

"form": {

|

||||

"cross_languages": [],

|

||||

"empty_response": "",

|

||||

"kb_ids": [

|

||||

"4ad1f9d0727311f0827dbafe6e7908e6"

|

||||

],

|

||||

"kb_ids": [],

|

||||

"keywords_similarity_weight": 0.7,

|

||||

"outputs": {

|

||||

"formalized_content": {

|

||||

@ -437,7 +425,7 @@

|

||||

"value": ""

|

||||

}

|

||||

},

|

||||

"query": "sys.query",

|

||||

"query": "{sys.query}",

|

||||

"rerank_id": "",

|

||||

"similarity_threshold": 0.2,

|

||||

"top_k": 1024,

|

||||

@ -539,7 +527,7 @@

|

||||

},

|

||||

"password": "20010812Yy!",

|

||||

"port": 3306,

|

||||

"sql": "Agent:WickedGoatsDivide@content",

|

||||

"sql": "{Agent:WickedGoatsDivide@content}",

|

||||

"username": "13637682833@163.com"

|

||||

},

|

||||

"label": "ExeSQL",

|

||||

|

||||

@@ -157,7 +157,7 @@ class CodeExec(ToolBase, ABC):

         try:
             resp = requests.post(url=f"http://{settings.SANDBOX_HOST}:9385/run", json=code_req, timeout=os.environ.get("COMPONENT_EXEC_TIMEOUT", 10*60))
-            logging.info(f"http://{settings.SANDBOX_HOST}:9385/run", code_req, resp.status_code)
+            logging.info(f"http://{settings.SANDBOX_HOST}:9385/run, code_req: {code_req}, resp.status_code {resp.status_code}:")
             if resp.status_code != 200:
                 resp.raise_for_status()
             body = resp.json()
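The logging change in this hunk can be illustrated with a small sketch. `logging` treats extra positional arguments as `%`-style format arguments, so a message without placeholders cannot consume them; using `%s` placeholders is one alternative to the f-string the commit adopts. The helper names `exec_timeout` and `log_sandbox_call` are hypothetical and not part of the commit. The timeout helper reflects that `os.environ.get` returns a string whenever the variable is set, so converting it to a number before handing it to an HTTP client seems safer.

```python
import logging
import os


def exec_timeout(default: int = 10 * 60) -> int:
    """Read COMPONENT_EXEC_TIMEOUT as an int, falling back to `default`.

    Hypothetical helper: environment values are strings, so convert explicitly.
    """
    raw = os.environ.get("COMPONENT_EXEC_TIMEOUT")
    if raw is None:
        return default
    try:
        return int(raw)
    except ValueError:
        return default


def log_sandbox_call(url: str, code_req: dict, status_code: int) -> None:
    """Log one sandbox call; hypothetical helper, not the commit's code."""
    # Each extra argument needs a matching placeholder; formatting is then
    # deferred until a handler actually emits the record.
    logging.info("%s, code_req: %s, resp.status_code: %s", url, code_req, status_code)
```

With the variable unset, `exec_timeout()` falls back to the ten-minute default used in the hunk.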

@@ -53,7 +53,7 @@ class ExeSQLParam(ToolParamBase):
         self.max_records = 1024

     def check(self):
-        self.check_valid_value(self.db_type, "Choose DB type", ['mysql', 'postgresql', 'mariadb', 'mssql'])
+        self.check_valid_value(self.db_type, "Choose DB type", ['mysql', 'postgres', 'mariadb', 'mssql'])
         self.check_empty(self.database, "Database name")
         self.check_empty(self.username, "database username")
         self.check_empty(self.host, "IP Address")
@@ -111,7 +111,7 @@ class ExeSQL(ToolBase, ABC):
         if self._param.db_type in ["mysql", "mariadb"]:
             db = pymysql.connect(db=self._param.database, user=self._param.username, host=self._param.host,
                                  port=self._param.port, password=self._param.password)
-        elif self._param.db_type == 'postgresql':
+        elif self._param.db_type == 'postgres':
             db = psycopg2.connect(dbname=self._param.database, user=self._param.username, host=self._param.host,
                                   port=self._param.port, password=self._param.password)
         elif self._param.db_type == 'mssql':
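The two hunks above rename the accepted value from `'postgresql'` to `'postgres'`. If previously saved configurations may still carry the old spelling, one option is to normalize the value before validation. The sketch below is an assumption about how that could look; `normalize_db_type` and the alias table are hypothetical and do not appear in the commit.

```python
SUPPORTED_DB_TYPES = ("mysql", "postgres", "mariadb", "mssql")

# Hypothetical alias table: maps the pre-rename spelling to the new one.
_DB_TYPE_ALIASES = {"postgresql": "postgres"}


def normalize_db_type(db_type: str) -> str:
    """Return a supported db_type, accepting the old 'postgresql' spelling."""
    key = db_type.strip().lower()
    key = _DB_TYPE_ALIASES.get(key, key)
    if key not in SUPPORTED_DB_TYPES:
        raise ValueError(f"Unsupported DB type: {db_type!r}")
    return key
```

Whether such a compatibility shim is wanted depends on how stored agent definitions are migrated, which the diff does not show.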

@@ -332,7 +332,7 @@ def test_db_connect():
         if req["db_type"] in ["mysql", "mariadb"]:
             db = MySQLDatabase(req["database"], user=req["username"], host=req["host"], port=req["port"],
                                password=req["password"])
-        elif req["db_type"] == 'postgresql':
+        elif req["db_type"] == 'postgres':
             db = PostgresqlDatabase(req["database"], user=req["username"], host=req["host"], port=req["port"],
                                     password=req["password"])
         elif req["db_type"] == 'mssql':

@@ -379,3 +379,19 @@ def get_meta():
                 code=settings.RetCode.AUTHENTICATION_ERROR
             )
     return get_json_result(data=DocumentService.get_meta_by_kbs(kb_ids))
+
+
+@manager.route("/basic_info", methods=["GET"])  # noqa: F821
+@login_required
+def get_basic_info():
+    kb_id = request.args.get("kb_id", "")
+    if not KnowledgebaseService.accessible(kb_id, current_user.id):
+        return get_json_result(
+            data=False,
+            message='No authorization.',
+            code=settings.RetCode.AUTHENTICATION_ERROR
+        )
+
+    basic_info = DocumentService.knowledgebase_basic_info(kb_id)
+
+    return get_json_result(data=basic_info)

@ -3,9 +3,11 @@ import re

|

||||

|

||||

import flask

|

||||

from flask import request

|

||||

from pathlib import Path

|

||||

|

||||

from api.db.services.document_service import DocumentService

|

||||

from api.db.services.file2document_service import File2DocumentService

|

||||

from api.db.services.knowledgebase_service import KnowledgebaseService

|

||||

from api.utils.api_utils import server_error_response, token_required

|

||||

from api.utils import get_uuid

|

||||

from api.db import FileType

|

||||

@@ -666,3 +668,71 @@ def move(tenant_id):
         return get_json_result(data=True)
     except Exception as e:
         return server_error_response(e)
+
+@manager.route('/file/convert', methods=['POST'])  # noqa: F821
+@token_required
+def convert(tenant_id):
+    req = request.json
+    kb_ids = req["kb_ids"]
+    file_ids = req["file_ids"]
+    file2documents = []
+
+    try:
+        files = FileService.get_by_ids(file_ids)
+        files_set = dict({file.id: file for file in files})
+        for file_id in file_ids:
+            file = files_set[file_id]
+            if not file:
+                return get_json_result(message="File not found!", code=404)
+            file_ids_list = [file_id]
+            if file.type == FileType.FOLDER.value:
+                file_ids_list = FileService.get_all_innermost_file_ids(file_id, [])
+            for id in file_ids_list:
+                informs = File2DocumentService.get_by_file_id(id)
+                # delete
+                for inform in informs:
+                    doc_id = inform.document_id
+                    e, doc = DocumentService.get_by_id(doc_id)
+                    if not e:
+                        return get_json_result(message="Document not found!", code=404)
+                    tenant_id = DocumentService.get_tenant_id(doc_id)
+                    if not tenant_id:
+                        return get_json_result(message="Tenant not found!", code=404)
+                    if not DocumentService.remove_document(doc, tenant_id):
+                        return get_json_result(
+                            message="Database error (Document removal)!", code=404)
+                File2DocumentService.delete_by_file_id(id)
+
+                # insert
+                for kb_id in kb_ids:
+                    e, kb = KnowledgebaseService.get_by_id(kb_id)
+                    if not e:
+                        return get_json_result(
+                            message="Can't find this knowledgebase!", code=404)
+                    e, file = FileService.get_by_id(id)
+                    if not e:
+                        return get_json_result(
+                            message="Can't find this file!", code=404)
+
+                    doc = DocumentService.insert({
+                        "id": get_uuid(),
+                        "kb_id": kb.id,
+                        "parser_id": FileService.get_parser(file.type, file.name, kb.parser_id),
+                        "parser_config": kb.parser_config,
+                        "created_by": tenant_id,
+                        "type": file.type,
+                        "name": file.name,
+                        "suffix": Path(file.name).suffix.lstrip("."),
+                        "location": file.location,
+                        "size": file.size
+                    })
+                    file2document = File2DocumentService.insert({
+                        "id": get_uuid(),
+                        "file_id": id,
+                        "document_id": doc.id,
+                    })
+
+                    file2documents.append(file2document.to_json())
+        return get_json_result(data=file2documents)
+    except Exception as e:
+        return server_error_response(e)
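One detail of `convert` worth illustrating: `files_set[file_id]` raises `KeyError` for an id that `FileService.get_by_ids` did not return, so the `if not file` branch is not reached for unknown ids and the outer `except` handles the error instead. A minimal sketch of a lookup that tolerates unknown ids follows; `FileRow` and `split_known_and_missing` are hypothetical stand-ins, not RAGFlow APIs.

```python
from dataclasses import dataclass


@dataclass
class FileRow:
    """Hypothetical stand-in for a file record; only the fields used here."""
    id: str
    name: str


def split_known_and_missing(file_ids, files):
    """Return (found_rows, missing_ids) without raising on unknown ids."""
    by_id = {f.id: f for f in files}
    found, missing = [], []
    for file_id in file_ids:
        row = by_id.get(file_id)  # .get returns None instead of raising KeyError
        if row is None:
            missing.append(file_id)
        else:
            found.append(row)
    return found, missing
```

A caller could return the "File not found!" response as soon as `missing` is non-empty, before any document is removed.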

@@ -414,7 +414,7 @@ def agents_completion_openai_compatibility(tenant_id, agent_id):
                 tenant_id,
                 agent_id,
                 question,
-                session_id=req.get("session_id", req.get("id", "") or req.get("metadata", {}).get("id", "")),
+                session_id=req.pop("session_id", req.get("id", "")) or req.get("metadata", {}).get("id", ""),
                 stream=True,
                 **req,
             ),
@@ -432,7 +432,7 @@ def agents_completion_openai_compatibility(tenant_id, agent_id):
             tenant_id,
             agent_id,
             question,
-            session_id=req.get("session_id", req.get("id", "") or req.get("metadata", {}).get("id", "")),
+            session_id=req.pop("session_id", req.get("id", "")) or req.get("metadata", {}).get("id", ""),
             stream=False,
             **req,
         )
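The switch from `req.get` to `req.pop` matters because the same call also expands `**req`. If `"session_id"` stays in `req`, Python receives the keyword twice and raises `TypeError`; this is presumably the motivation for the change, though the diff does not say so. The sketch below reproduces the pattern with a stand-in `completion` function, which is an assumption for illustration and not the real RAGFlow signature.

```python
def completion(tenant_id, agent_id, question, session_id=None, stream=True, **kwargs):
    """Stand-in for the real completion call; it only echoes what it received."""
    return {"session_id": session_id, "stream": stream, "extra": kwargs}


def call_with_get(req: dict) -> dict:
    # Old pattern: "session_id" stays in req, so **req passes it a second time.
    return completion("tenant", "agent", "hi",
                      session_id=req.get("session_id", ""), stream=False, **req)


def call_with_pop(req: dict) -> dict:
    req = dict(req)  # copy so the caller's dict is not mutated
    # New pattern: pop removes the key before **req is expanded; the metadata
    # id is used as a fallback when the popped value is empty.
    session_id = req.pop("session_id", req.get("id", "")) or req.get("metadata", {}).get("id", "")
    return completion("tenant", "agent", "hi", session_id=session_id, stream=False, **req)
```

Note that the new expression also moves the `or` outside the default argument, so the metadata fallback applies even when `session_id` is present but empty.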

@@ -36,6 +36,8 @@ from rag.utils.storage_factory import STORAGE_IMPL, STORAGE_IMPL_TYPE
 from timeit import default_timer as timer

 from rag.utils.redis_conn import REDIS_CONN
+from flask import jsonify
+from api.utils.health_utils import run_health_checks

 @manager.route("/version", methods=["GET"])  # noqa: F821
 @login_required
@@ -169,6 +171,12 @@ def status():
     return get_json_result(data=res)


+@manager.route("/healthz", methods=["GET"])  # noqa: F821
+def healthz():
+    result, all_ok = run_health_checks()
+    return jsonify(result), (200 if all_ok else 500)
+
+
 @manager.route("/new_token", methods=["POST"])  # noqa: F821
 @login_required
 def new_token():

@@ -144,8 +144,9 @@ def init_llm_factory():
             except Exception:
                 pass
             break
+    doc_count = DocumentService.get_all_kb_doc_count()
     for kb_id in KnowledgebaseService.get_all_ids():
-        KnowledgebaseService.update_document_number_in_init(kb_id=kb_id, doc_num=DocumentService.get_kb_doc_count(kb_id))
+        KnowledgebaseService.update_document_number_in_init(kb_id=kb_id, doc_num=doc_count.get(kb_id, 0))



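This hunk replaces one count query per knowledge base with a single grouped query whose result is looked up with `doc_count.get(kb_id, 0)`. The idea can be sketched with the standard-library `sqlite3` module; the table layout and the function name `doc_counts_by_kb` are assumptions for illustration, and the real code goes through peewee models instead.

```python
import sqlite3


def doc_counts_by_kb(conn: sqlite3.Connection) -> dict:
    """Return {kb_id: document_count} using one GROUP BY query."""
    rows = conn.execute("SELECT kb_id, COUNT(id) FROM document GROUP BY kb_id")
    return {kb_id: count for kb_id, count in rows}
```

Knowledge bases with no documents do not appear in the grouped result, which is why the caller needs the `.get(kb_id, 0)` default.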

@@ -24,7 +24,7 @@ from io import BytesIO

 import trio
 import xxhash
-from peewee import fn
+from peewee import fn, Case

 from api import settings
 from api.constants import IMG_BASE64_PREFIX, FILE_NAME_LEN_LIMIT
@@ -660,8 +660,16 @@ class DocumentService(CommonService):
     @classmethod
     @DB.connection_context()
     def get_kb_doc_count(cls, kb_id):
-        return len(cls.model.select(cls.model.id).where(
-            cls.model.kb_id == kb_id).dicts())
+        return cls.model.select().where(cls.model.kb_id == kb_id).count()
+
+    @classmethod
+    @DB.connection_context()
+    def get_all_kb_doc_count(cls):
+        result = {}
+        rows = cls.model.select(cls.model.kb_id, fn.COUNT(cls.model.id).alias('count')).group_by(cls.model.kb_id)
+        for row in rows:
+            result[row.kb_id] = row.count
+        return result

     @classmethod
     @DB.connection_context()

@@ -674,6 +682,53 @@ class DocumentService(CommonService):
         return False


+    @classmethod
+    @DB.connection_context()
+    def knowledgebase_basic_info(cls, kb_id: str) -> dict[str, int]:
+        # cancelled: run == "2" but progress can vary
+        cancelled = (
+            cls.model.select(fn.COUNT(1))
+            .where((cls.model.kb_id == kb_id) & (cls.model.run == TaskStatus.CANCEL))
+            .scalar()
+        )
+
+        row = (
+            cls.model.select(
+                # finished: progress == 1
+                fn.COALESCE(fn.SUM(Case(None, [(cls.model.progress == 1, 1)], 0)), 0).alias("finished"),
+
+                # failed: progress == -1
+                fn.COALESCE(fn.SUM(Case(None, [(cls.model.progress == -1, 1)], 0)), 0).alias("failed"),
+
+                # processing: 0 <= progress < 1
+                fn.COALESCE(
+                    fn.SUM(
+                        Case(
+                            None,
+                            [
+                                (((cls.model.progress == 0) | ((cls.model.progress > 0) & (cls.model.progress < 1))), 1),
+                            ],
+                            0,
+                        )
+                    ),
+                    0,
+                ).alias("processing"),
+            )
+            .where(
+                (cls.model.kb_id == kb_id)
+                & ((cls.model.run.is_null(True)) | (cls.model.run != TaskStatus.CANCEL))
+            )
+            .dicts()
+            .get()
+        )
+
+        return {
+            "processing": int(row["processing"]),
+            "finished": int(row["finished"]),
+            "failed": int(row["failed"]),
+            "cancelled": int(cancelled),
+        }
+
 def queue_raptor_o_graphrag_tasks(doc, ty, priority):
     chunking_config = DocumentService.get_chunking_config(doc["id"])
     hasher = xxhash.xxh64()
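`knowledgebase_basic_info` uses conditional aggregation: each status is counted with `SUM(CASE WHEN ... THEN 1 ELSE 0 END)` inside one query, and `COALESCE` turns the `NULL` that `SUM` yields for an empty set into 0. The sketch below expresses the same idea in plain SQL through `sqlite3`. The table layout is an assumption, and the value `"2"` for the cancelled state is taken from the comment in the hunk rather than from the `TaskStatus` definition.

```python
import sqlite3

CANCEL = "2"  # assumed value of TaskStatus.CANCEL, per the comment in the hunk

STATUS_QUERY = """
SELECT
    COALESCE(SUM(CASE WHEN progress = 1 THEN 1 ELSE 0 END), 0),
    COALESCE(SUM(CASE WHEN progress = -1 THEN 1 ELSE 0 END), 0),
    COALESCE(SUM(CASE WHEN progress >= 0 AND progress < 1 THEN 1 ELSE 0 END), 0)
FROM document
WHERE kb_id = ? AND (run IS NULL OR run != ?)
"""


def basic_info(conn: sqlite3.Connection, kb_id: str) -> dict:
    """Count documents per status for one knowledge base."""
    finished, failed, processing = conn.execute(STATUS_QUERY, (kb_id, CANCEL)).fetchone()
    # Cancelled documents are counted separately because their progress can vary.
    (cancelled,) = conn.execute(
        "SELECT COUNT(1) FROM document WHERE kb_id = ? AND run = ?", (kb_id, CANCEL)
    ).fetchone()
    return {
        "processing": int(processing),
        "finished": int(finished),
        "failed": int(failed),
        "cancelled": int(cancelled),
    }
```

Excluding cancelled rows from the first query keeps the four counts disjoint.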

@@ -702,6 +757,8 @@ def queue_raptor_o_graphrag_tasks(doc, ty, priority):

 def get_queue_length(priority):
     group_info = REDIS_CONN.queue_info(get_svr_queue_name(priority), SVR_CONSUMER_GROUP_NAME)
+    if not group_info:
+        return 0
     return int(group_info.get("lag", 0) or 0)


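The added guard covers the case where the queue lookup yields nothing, which would otherwise fail on `.get`. A minimal sketch of the same logic on plain values, assuming the lookup returns either `None` or a dict; `queue_lag` is a hypothetical name and not part of the commit.

```python
def queue_lag(group_info) -> int:
    """Return the consumer-group lag, treating missing info as an empty queue."""
    if not group_info:  # None or an empty dict: nothing is known about the group
        return 0
    # "or 0" also covers a present but null "lag" value.
    return int(group_info.get("lag", 0) or 0)
```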

@ -847,3 +904,4 @@ def doc_upload_and_parse(conversation_id, file_objs, user_id):

|

||||

doc_id, kb.id, token_counts[doc_id], chunk_counts[doc_id], 0)

|

||||

|

||||

return [d["id"] for d, _ in files]

|

||||

|

||||

|

||||

90

api/utils/health_utils.py

Normal file


@@ -0,0 +1,90 @@
+from timeit import default_timer as timer
+
+from api import settings
+from api.db.db_models import DB
+from rag.utils.redis_conn import REDIS_CONN
+from rag.utils.storage_factory import STORAGE_IMPL
+
+
+def _ok_nok(ok: bool) -> str:
+    return "ok" if ok else "nok"
+
+
+def check_db() -> tuple[bool, dict]:
+    st = timer()
+    try:
+        # lightweight probe; works for MySQL/Postgres
+        DB.execute_sql("SELECT 1")
+        return True, {"elapsed": f"{(timer() - st) * 1000.0:.1f}"}
+    except Exception as e:
+        return False, {"elapsed": f"{(timer() - st) * 1000.0:.1f}", "error": str(e)}
+
+
+def check_redis() -> tuple[bool, dict]:
+    st = timer()
+    try:
+        ok = bool(REDIS_CONN.health())
+        return ok, {"elapsed": f"{(timer() - st) * 1000.0:.1f}"}
+    except Exception as e:
+        return False, {"elapsed": f"{(timer() - st) * 1000.0:.1f}", "error": str(e)}
+
+
+def check_doc_engine() -> tuple[bool, dict]:
+    st = timer()
+    try:
+        meta = settings.docStoreConn.health()
+        # treat any successful call as ok
+        return True, {"elapsed": f"{(timer() - st) * 1000.0:.1f}", **(meta or {})}
+    except Exception as e:
+        return False, {"elapsed": f"{(timer() - st) * 1000.0:.1f}", "error": str(e)}
+
+
+def check_storage() -> tuple[bool, dict]:
+    st = timer()
+    try:
+        STORAGE_IMPL.health()
+        return True, {"elapsed": f"{(timer() - st) * 1000.0:.1f}"}
+    except Exception as e:
+        return False, {"elapsed": f"{(timer() - st) * 1000.0:.1f}", "error": str(e)}
+
+
+
+
+def run_health_checks() -> tuple[dict, bool]:
+    result: dict[str, str | dict] = {}
+
+    db_ok, db_meta = check_db()
+    result["db"] = _ok_nok(db_ok)
+    if not db_ok:
+        result.setdefault("_meta", {})["db"] = db_meta
+
+    try:
+        redis_ok, redis_meta = check_redis()
+        result["redis"] = _ok_nok(redis_ok)
+        if not redis_ok:
+            result.setdefault("_meta", {})["redis"] = redis_meta
+    except Exception:
+        result["redis"] = "nok"
+
+    try:
+        doc_ok, doc_meta = check_doc_engine()
+        result["doc_engine"] = _ok_nok(doc_ok)
+        if not doc_ok:
+            result.setdefault("_meta", {})["doc_engine"] = doc_meta
+    except Exception:
+        result["doc_engine"] = "nok"
+
+    try:
+        sto_ok, sto_meta = check_storage()
+        result["storage"] = _ok_nok(sto_ok)
+        if not sto_ok:
+            result.setdefault("_meta", {})["storage"] = sto_meta
+    except Exception:
+        result["storage"] = "nok"
+
+
+    all_ok = (result.get("db") == "ok") and (result.get("redis") == "ok") and (result.get("doc_engine") == "ok") and (result.get("storage") == "ok")
+    result["status"] = "ok" if all_ok else "nok"
+    return result, all_ok
+
+
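The four `check_*` functions and the four blocks in `run_health_checks` follow one pattern: time a probe, map success to `"ok"` or `"nok"`, and attach metadata only on failure. The sketch below shows that pattern as a loop over named probes. It is an illustration under assumptions, not the file's implementation: `timed_check` and `run_checks` are hypothetical names, and a probe here counts as healthy only if it returns a truthy value, whereas the original treats any call that does not raise as healthy for the database, document engine, and storage.

```python
from timeit import default_timer as timer
from typing import Callable


def timed_check(probe: Callable[[], object]) -> tuple[bool, dict]:
    """Run one probe and report (ok, metadata) with elapsed milliseconds."""
    st = timer()
    try:
        ok = bool(probe())
        return ok, {"elapsed": f"{(timer() - st) * 1000.0:.1f}"}
    except Exception as e:
        return False, {"elapsed": f"{(timer() - st) * 1000.0:.1f}", "error": str(e)}


def run_checks(probes: dict[str, Callable[[], object]]) -> tuple[dict, bool]:
    """Aggregate named probes into the same result shape as run_health_checks."""
    result: dict = {}
    for name, probe in probes.items():
        ok, meta = timed_check(probe)
        result[name] = "ok" if ok else "nok"
        if not ok:
            # Metadata is attached only for failing components.
            result.setdefault("_meta", {})[name] = meta
    all_ok = all(result[name] == "ok" for name in probes)
    result["status"] = "ok" if all_ok else "nok"
    return result, all_ok
```

A route like `/healthz` could then map `all_ok` to HTTP 200 or 500, as the diff does with `jsonify(result), (200 if all_ok else 500)`.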

@ -219,6 +219,70 @@

|

||||

}

|

||||

]

|

||||

},

|

||||

{

|

||||

"name": "TokenPony",

|

||||

"logo": "",

|

||||

"tags": "LLM",

|

||||

"status": "1",

|

||||

"llm": [

|

||||

{

|

||||

"llm_name": "qwen3-8b",

|

||||

"tags": "LLM,CHAT,131k",

|

||||

"max_tokens": 131000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "deepseek-v3-0324",

|

||||

"tags": "LLM,CHAT,128k",

|

||||

"max_tokens": 128000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "qwen3-32b",

|

||||

"tags": "LLM,CHAT,131k",

|

||||

"max_tokens": 131000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "kimi-k2-instruct",

|

||||

"tags": "LLM,CHAT,128K",

|

||||

"max_tokens": 128000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "deepseek-r1-0528",

|

||||

"tags": "LLM,CHAT,164k",

|

||||

"max_tokens": 164000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "qwen3-coder-480b",

|

||||

"tags": "LLM,CHAT,1024k",

|

||||

"max_tokens": 1024000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "glm-4.5",

|

||||

"tags": "LLM,CHAT,131K",

|

||||

"max_tokens": 131000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "deepseek-v3.1",

|

||||

"tags": "LLM,CHAT,128k",

|

||||

"max_tokens": 128000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

}

|

||||

]

|

||||

},

|

||||

{

|

||||

"name": "Tongyi-Qianwen",

|

||||

"logo": "",

|

||||
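Each model entry added in this hunk carries the same five keys (`llm_name`, `tags`, `max_tokens`, `model_type`, `is_tools`). When adding a provider block by hand, a small consistency check can catch missing keys or duplicated model names before the file is loaded. The following is a hypothetical validator sketched for illustration; it is not part of RAGFlow, and the set of required keys is inferred only from the entries shown in this diff.

```python
REQUIRED_KEYS = {"llm_name", "tags", "max_tokens", "model_type", "is_tools"}


def validate_factory(factory: dict) -> list[str]:
    """Return a list of human-readable problems found in one factory block."""
    problems = []
    seen = set()
    for model in factory.get("llm", []):
        name = model.get("llm_name", "<unnamed>")
        missing = REQUIRED_KEYS - model.keys()
        if missing:
            problems.append(f"{name}: missing {sorted(missing)}")
        if name in seen:
            problems.append(f"{name}: duplicate llm_name")
        seen.add(name)
        max_tokens = model.get("max_tokens")
        if not isinstance(max_tokens, int) or max_tokens <= 0:
            problems.append(f"{name}: max_tokens must be a positive integer")
    return problems
```

The check is scoped to a single provider, so the same model name appearing under two different providers is not reported.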

@ -625,7 +689,7 @@

|

||||

},

|

||||

{

|

||||

"llm_name": "glm-4",

|

||||

"tags":"LLM,CHAT,128K",

|

||||

"tags": "LLM,CHAT,128K",

|

||||

"max_tokens": 128000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

@ -4477,6 +4541,273 @@

|

||||

}

|

||||

]

|

||||

},

|

||||

{

|

||||

"name": "CometAPI",

|

||||

"logo": "",

|

||||

"tags": "LLM,TEXT EMBEDDING,IMAGE2TEXT",

|

||||

"status": "1",

|

||||

"llm": [

|

||||

{

|

||||

"llm_name": "gpt-5-chat-latest",

|

||||

"tags": "LLM,CHAT,400k",

|

||||

"max_tokens": 400000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "chatgpt-4o-latest",

|

||||

"tags": "LLM,CHAT,128k",

|

||||

"max_tokens": 128000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gpt-5-mini",

|

||||

"tags": "LLM,CHAT,400k",

|

||||

"max_tokens": 400000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gpt-5-nano",

|

||||

"tags": "LLM,CHAT,400k",

|

||||

"max_tokens": 400000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gpt-5",

|

||||

"tags": "LLM,CHAT,400k",

|

||||

"max_tokens": 400000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gpt-4.1-mini",

|

||||

"tags": "LLM,CHAT,1M",

|

||||

"max_tokens": 1047576,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gpt-4.1-nano",

|

||||

"tags": "LLM,CHAT,1M",

|

||||

"max_tokens": 1047576,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gpt-4.1",

|

||||

"tags": "LLM,CHAT,1M",

|

||||

"max_tokens": 1047576,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gpt-4o-mini",

|

||||

"tags": "LLM,CHAT,128k",

|

||||

"max_tokens": 128000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "o4-mini-2025-04-16",

|

||||

"tags": "LLM,CHAT,200k",

|

||||

"max_tokens": 200000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "o3-pro-2025-06-10",

|

||||

"tags": "LLM,CHAT,200k",

|

||||

"max_tokens": 200000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "claude-opus-4-1-20250805",

|

||||

"tags": "LLM,CHAT,200k,IMAGE2TEXT",

|

||||

"max_tokens": 200000,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "claude-opus-4-1-20250805-thinking",

|

||||

"tags": "LLM,CHAT,200k,IMAGE2TEXT",

|

||||

"max_tokens": 200000,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "claude-sonnet-4-20250514",

|

||||

"tags": "LLM,CHAT,200k,IMAGE2TEXT",

|

||||

"max_tokens": 200000,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "claude-sonnet-4-20250514-thinking",

|

||||

"tags": "LLM,CHAT,200k,IMAGE2TEXT",

|

||||

"max_tokens": 200000,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "claude-3-7-sonnet-latest",

|

||||

"tags": "LLM,CHAT,200k",

|

||||

"max_tokens": 200000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "claude-3-5-haiku-latest",

|

||||

"tags": "LLM,CHAT,200k",

|

||||

"max_tokens": 200000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gemini-2.5-pro",

|

||||

"tags": "LLM,CHAT,1M,IMAGE2TEXT",

|

||||

"max_tokens": 1000000,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gemini-2.5-flash",

|

||||

"tags": "LLM,CHAT,1M,IMAGE2TEXT",

|

||||

"max_tokens": 1000000,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gemini-2.5-flash-lite",

|

||||

"tags": "LLM,CHAT,1M,IMAGE2TEXT",

|

||||

"max_tokens": 1000000,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "gemini-2.0-flash",

|

||||

"tags": "LLM,CHAT,1M,IMAGE2TEXT",

|

||||

"max_tokens": 1000000,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "grok-4-0709",

|

||||

"tags": "LLM,CHAT,131k",

|

||||

"max_tokens": 131072,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "grok-3",

|

||||

"tags": "LLM,CHAT,131k",

|

||||

"max_tokens": 131072,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "grok-3-mini",

|

||||

"tags": "LLM,CHAT,131k",

|

||||

"max_tokens": 131072,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "grok-2-image-1212",

|

||||

"tags": "LLM,CHAT,32k,IMAGE2TEXT",

|

||||

"max_tokens": 32768,

|

||||

"model_type": "image2text",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "deepseek-v3.1",

|

||||

"tags": "LLM,CHAT,64k",

|

||||

"max_tokens": 64000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "deepseek-v3",

|

||||

"tags": "LLM,CHAT,64k",

|

||||

"max_tokens": 64000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "deepseek-r1-0528",

|

||||

"tags": "LLM,CHAT,164k",

|

||||

"max_tokens": 164000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "deepseek-chat",

|

||||

"tags": "LLM,CHAT,32k",

|

||||

"max_tokens": 32000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "deepseek-reasoner",

|

||||

"tags": "LLM,CHAT,64k",

|

||||

"max_tokens": 64000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "qwen3-30b-a3b",

|

||||

"tags": "LLM,CHAT,128k",

|

||||

"max_tokens": 128000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "qwen3-coder-plus-2025-07-22",

|

||||

"tags": "LLM,CHAT,128k",

|

||||

"max_tokens": 128000,

|

||||

"model_type": "chat",

|

||||

"is_tools": true

|

||||

},

|

||||

{

|

||||

"llm_name": "text-embedding-ada-002",

|

||||

"tags": "TEXT EMBEDDING,8K",

|

||||

"max_tokens": 8191,

|

||||

"model_type": "embedding",

|

||||

"is_tools": false

|

||||

},

|

||||

{

|

||||

"llm_name": "text-embedding-3-small",

|

||||

"tags": "TEXT EMBEDDING,8K",

|

||||

"max_tokens": 8191,

|

||||

"model_type": "embedding",

|

||||

"is_tools": false

|

||||

},

|

||||

{

|

||||

"llm_name": "text-embedding-3-large",

|

||||

"tags": "TEXT EMBEDDING,8K",

|

||||

"max_tokens": 8191,

|

||||

"model_type": "embedding",

|

||||

"is_tools": false

|

||||

},

|

||||

{

|

||||

"llm_name": "whisper-1",

|

||||

"tags": "SPEECH2TEXT",

|

||||

"max_tokens": 26214400,

|

||||

"model_type": "speech2text",

|

||||

"is_tools": false

|

||||

},

|

||||

{

|

||||

"llm_name": "tts-1",

|

||||

"tags": "TTS",

|

||||

"max_tokens": 2048,

|

||||

"model_type": "tts",

|

||||

"is_tools": false

|

||||

}

|

||||

]

|

||||

},

|

||||

{

|

||||

"name": "Meituan",

|

||||

"logo": "",

|

||||

@ -4493,4 +4824,4 @@

|

||||

]

|

||||

}

|

||||

]

|

||||

}

|

||||

}

|

||||

@@ -37,7 +37,7 @@ TITLE_TAGS = {"h1": "#", "h2": "##", "h3": "###", "h4": "#####", "h5": "#####",


 class RAGFlowHtmlParser:
-    def __call__(self, fnm, binary=None, chunk_token_num=None):
+    def __call__(self, fnm, binary=None, chunk_token_num=512):
         if binary:
             encoding = find_codec(binary)
             txt = binary.decode(encoding, errors="ignore")
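The default for `chunk_token_num` changes from `None` to `512`. The diff does not show how the parser uses the value, but a numeric default is the safer choice wherever the budget is compared against a length, since comparing an `int` with `None` raises `TypeError`. The sketch below illustrates this with a hypothetical `chunk_tokens` helper; it is not the logic of `RAGFlowHtmlParser`.

```python
def chunk_tokens(tokens, chunk_token_num=512):
    """Group tokens into lists of at most chunk_token_num items."""
    chunks, current = [], []
    for token in tokens:
        current.append(token)
        # With chunk_token_num=None this comparison would raise TypeError.
        if len(current) >= chunk_token_num:
            chunks.append(current)
            current = []
    if current:
        chunks.append(current)
    return chunks
```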

@@ -34,7 +34,7 @@ from pypdf import PdfReader as pdf2_read

 from api import settings
 from api.utils.file_utils import get_project_base_directory
-from deepdoc.vision import OCR, LayoutRecognizer, Recognizer, TableStructureRecognizer
+from deepdoc.vision import OCR, AscendLayoutRecognizer, LayoutRecognizer, Recognizer, TableStructureRecognizer
 from rag.app.picture import vision_llm_chunk as picture_vision_llm_chunk
 from rag.nlp import rag_tokenizer
 from rag.prompts import vision_llm_describe_prompt

@ -64,33 +64,38 @@ class RAGFlowPdfParser:
 if PARALLEL_DEVICES > 1:
 self.parallel_limiter = [trio.CapacityLimiter(1) for _ in range(PARALLEL_DEVICES)]

+layout_recognizer_type = os.getenv("LAYOUT_RECOGNIZER_TYPE", "onnx").lower()
+if layout_recognizer_type not in ["onnx", "ascend"]:
+raise RuntimeError("Unsupported layout recognizer type.")
+
 if hasattr(self, "model_speciess"):
-self.layouter = LayoutRecognizer("layout." + self.model_speciess)
+recognizer_domain = "layout." + self.model_speciess
 else:
-self.layouter = LayoutRecognizer("layout")
+recognizer_domain = "layout"
+
+if layout_recognizer_type == "ascend":
+logging.debug("Using Ascend LayoutRecognizer")
+self.layouter = AscendLayoutRecognizer(recognizer_domain)
+else: # onnx
+logging.debug("Using Onnx LayoutRecognizer")
+self.layouter = LayoutRecognizer(recognizer_domain)
 self.tbl_det = TableStructureRecognizer()

 self.updown_cnt_mdl = xgb.Booster()
 if not settings.LIGHTEN:
 try:
 import torch.cuda
+
 if torch.cuda.is_available():
 self.updown_cnt_mdl.set_param({"device": "cuda"})
 except Exception:
 logging.exception("RAGFlowPdfParser __init__")
 try:
-model_dir = os.path.join(
-get_project_base_directory(),
-"rag/res/deepdoc")
-self.updown_cnt_mdl.load_model(os.path.join(
-model_dir, "updown_concat_xgb.model"))
+model_dir = os.path.join(get_project_base_directory(), "rag/res/deepdoc")
+self.updown_cnt_mdl.load_model(os.path.join(model_dir, "updown_concat_xgb.model"))
 except Exception:
-model_dir = snapshot_download(
-repo_id="InfiniFlow/text_concat_xgb_v1.0",
-local_dir=os.path.join(get_project_base_directory(), "rag/res/deepdoc"),
-local_dir_use_symlinks=False)
-self.updown_cnt_mdl.load_model(os.path.join(
-model_dir, "updown_concat_xgb.model"))
+model_dir = snapshot_download(repo_id="InfiniFlow/text_concat_xgb_v1.0", local_dir=os.path.join(get_project_base_directory(), "rag/res/deepdoc"), local_dir_use_symlinks=False)
+self.updown_cnt_mdl.load_model(os.path.join(model_dir, "updown_concat_xgb.model"))

 self.page_from = 0
 self.column_num = 1
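The hunk above chooses the layout recognizer backend from the `LAYOUT_RECOGNIZER_TYPE` environment variable (default `onnx`, compared case-insensitively) and rejects anything other than `onnx` or `ascend`. A minimal sketch of that selection logic follows. `OnnxLayouter`, `AscendLayouter`, and `build_layouter` are hypothetical stand-ins for illustration only, not the real `deepdoc.vision` classes.

```python
import os


class OnnxLayouter:
    """Hypothetical stand-in for the ONNX-backed recognizer."""

    def __init__(self, domain):
        self.domain = domain


class AscendLayouter:
    """Hypothetical stand-in for the Ascend-backed recognizer."""

    def __init__(self, domain):
        self.domain = domain


def build_layouter(model_species=None, env=None):
    # Read the backend name as the hunk does: default "onnx", lower-cased.
    env = os.environ if env is None else env
    kind = env.get("LAYOUT_RECOGNIZER_TYPE", "onnx").lower()
    if kind not in ("onnx", "ascend"):
        raise RuntimeError("Unsupported layout recognizer type.")
    # The domain string is computed once, then handed to whichever backend was chosen.
    domain = "layout." + model_species if model_species else "layout"
    cls = AscendLayouter if kind == "ascend" else OnnxLayouter
    return cls(domain)
```

Computing the domain before picking the class mirrors the refactor in the hunk: the two branches no longer each construct a recognizer, so adding a backend only touches the final dispatch.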

@ -102,13 +107,10 @@ class RAGFlowPdfParser:
 return c["bottom"] - c["top"]

 def _x_dis(self, a, b):
-return min(abs(a["x1"] - b["x0"]), abs(a["x0"] - b["x1"]),
-abs(a["x0"] + a["x1"] - b["x0"] - b["x1"]) / 2)
+return min(abs(a["x1"] - b["x0"]), abs(a["x0"] - b["x1"]), abs(a["x0"] + a["x1"] - b["x0"] - b["x1"]) / 2)

-def _y_dis(
-self, a, b):
-return (
-b["top"] + b["bottom"] - a["top"] - a["bottom"]) / 2
+def _y_dis(self, a, b):
+return (b["top"] + b["bottom"] - a["top"] - a["bottom"]) / 2

 def _match_proj(self, b):
 proj_patt = [
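As a worked illustration of the two distance helpers in the hunk above, the sketch below copies their formulas into free functions and applies them to two made-up boxes. The box values and the names `x_dis`, `y_dis`, `up`, and `down` are assumptions chosen for the example.

```python
def x_dis(a, b):
    # Smallest of three horizontal measures: a's right edge to b's left edge,
    # a's left edge to b's right edge, and the distance between horizontal centers.
    return min(abs(a["x1"] - b["x0"]), abs(a["x0"] - b["x1"]), abs(a["x0"] + a["x1"] - b["x0"] - b["x1"]) / 2)


def y_dis(a, b):
    # Signed distance between vertical centers: positive when b's center
    # has the larger top/bottom coordinates.
    return (b["top"] + b["bottom"] - a["top"] - a["bottom"]) / 2


# Two illustrative boxes that share a horizontal center but sit on different lines.
up = {"x0": 10, "x1": 110, "top": 100, "bottom": 112}
down = {"x0": 12, "x1": 108, "top": 116, "bottom": 128}
print(x_dis(up, down), y_dis(up, down))
```

Because the centers coincide horizontally, the center term dominates the `min` and the horizontal distance is zero, while the vertical distance equals the offset between the two line centers.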

@ -130,10 +132,7 @@ class RAGFlowPdfParser:
 LEN = 6
 tks_down = rag_tokenizer.tokenize(down["text"][:LEN]).split()
 tks_up = rag_tokenizer.tokenize(up["text"][-LEN:]).split()
-tks_all = up["text"][-LEN:].strip() \
-+ (" " if re.match(r"[a-zA-Z0-9]+",
-up["text"][-1] + down["text"][0]) else "") \
-+ down["text"][:LEN].strip()
+tks_all = up["text"][-LEN:].strip() + (" " if re.match(r"[a-zA-Z0-9]+", up["text"][-1] + down["text"][0]) else "") + down["text"][:LEN].strip()
 tks_all = rag_tokenizer.tokenize(tks_all).split()
 fea = [
 up.get("R", -1) == down.get("R", -1),

@ -144,39 +143,30 @@ class RAGFlowPdfParser:
 down["layout_type"] == "text",
 up["layout_type"] == "table",
 down["layout_type"] == "table",
-True if re.search(
-r"([。?!;!?;+))]|[a-z]\.)$",
-up["text"]) else False,
+True if re.search(r"([。?!;!?;+))]|[a-z]\.)$", up["text"]) else False,
 True if re.search(r"[,:‘“、0-9(+-]$", up["text"]) else False,
-True if re.search(
-r"(^.?[/,?;:\],。;:’”?!》】)-])",
-down["text"]) else False,
+True if re.search(r"(^.?[/,?;:\],。;:’”?!》】)-])", down["text"]) else False,
 True if re.match(r"[\((][^\(\)()]+[)\)]$", up["text"]) else False,
 True if re.search(r"[,,][^。.]+$", up["text"]) else False,
 True if re.search(r"[,,][^。.]+$", up["text"]) else False,
-True if re.search(r"[\((][^\))]+$", up["text"])
-and re.search(r"[\))]", down["text"]) else False,
+True if re.search(r"[\((][^\))]+$", up["text"]) and re.search(r"[\))]", down["text"]) else False,
 self._match_proj(down),
 True if re.match(r"[A-Z]", down["text"]) else False,
 True if re.match(r"[A-Z]", up["text"][-1]) else False,
 True if re.match(r"[a-z0-9]", up["text"][-1]) else False,
 True if re.match(r"[0-9.%,-]+$", down["text"]) else False,
-up["text"].strip()[-2:] == down["text"].strip()[-2:] if len(up["text"].strip()
-) > 1 and len(
-down["text"].strip()) > 1 else False,
+up["text"].strip()[-2:] == down["text"].strip()[-2:] if len(up["text"].strip()) > 1 and len(down["text"].strip()) > 1 else False,
 up["x0"] > down["x1"],
-abs(self.__height(up) - self.__height(down)) / min(self.__height(up),
-self.__height(down)),
+abs(self.__height(up) - self.__height(down)) / min(self.__height(up), self.__height(down)),
 self._x_dis(up, down) / max(w, 0.000001),
-(len(up["text"]) - len(down["text"])) /
-max(len(up["text"]), len(down["text"])),
+(len(up["text"]) - len(down["text"])) / max(len(up["text"]), len(down["text"])),
 len(tks_all) - len(tks_up) - len(tks_down),
 len(tks_down) - len(tks_up),
 tks_down[-1] == tks_up[-1] if tks_down and tks_up else False,
 max(down["in_row"], up["in_row"]),
 abs(down["in_row"] - up["in_row"]),
 len(tks_down) == 1 and rag_tokenizer.tag(tks_down[0]).find("n") >= 0,
-len(tks_up) == 1 and rag_tokenizer.tag(tks_up[0]).find("n") >= 0
+len(tks_up) == 1 and rag_tokenizer.tag(tks_up[0]).find("n") >= 0,
 ]
 return fea


@ -187,9 +177,7 @@ class RAGFlowPdfParser:
 for i in range(len(arr) - 1):
 for j in range(i, -1, -1):
 # restore the order using th
-if abs(arr[j + 1]["x0"] - arr[j]["x0"]) < threshold \
-and arr[j + 1]["top"] < arr[j]["top"] \
-and arr[j + 1]["page_number"] == arr[j]["page_number"]:
+if abs(arr[j + 1]["x0"] - arr[j]["x0"]) < threshold and arr[j + 1]["top"] < arr[j]["top"] and arr[j + 1]["page_number"] == arr[j]["page_number"]:
 tmp = arr[j]
 arr[j] = arr[j + 1]
 arr[j + 1] = tmp

@ -197,8 +185,7 @@ class RAGFlowPdfParser:

 def _has_color(self, o):
 if o.get("ncs", "") == "DeviceGray":
-if o["stroking_color"] and o["stroking_color"][0] == 1 and o["non_stroking_color"] and \
-o["non_stroking_color"][0] == 1:
+if o["stroking_color"] and o["stroking_color"][0] == 1 and o["non_stroking_color"] and o["non_stroking_color"][0] == 1:
 if re.match(r"[a-zT_\[\]\(\)-]+", o.get("text", "")):
 return False
 return True

@ -216,8 +203,7 @@ class RAGFlowPdfParser:
 if not tbls:
 continue
 for tb in tbls: # for table
-left, top, right, bott = tb["x0"] - MARGIN, tb["top"] - MARGIN, \
-tb["x1"] + MARGIN, tb["bottom"] + MARGIN
+left, top, right, bott = tb["x0"] - MARGIN, tb["top"] - MARGIN, tb["x1"] + MARGIN, tb["bottom"] + MARGIN
 left *= ZM
 top *= ZM
 right *= ZM

@ -232,14 +218,13 @@ class RAGFlowPdfParser:
 tbcnt = np.cumsum(tbcnt)
 for i in range(len(tbcnt) - 1): # for page
 pg = []
-for j, tb_items in enumerate(
-recos[tbcnt[i]: tbcnt[i + 1]]): # for table
-poss = pos[tbcnt[i]: tbcnt[i + 1]]
+for j, tb_items in enumerate(recos[tbcnt[i] : tbcnt[i + 1]]): # for table
+poss = pos[tbcnt[i] : tbcnt[i + 1]]
 for it in tb_items: # for table components
-it["x0"] = (it["x0"] + poss[j][0])
-it["x1"] = (it["x1"] + poss[j][0])
-it["top"] = (it["top"] + poss[j][1])
-it["bottom"] = (it["bottom"] + poss[j][1])
+it["x0"] = it["x0"] + poss[j][0]
+it["x1"] = it["x1"] + poss[j][0]
+it["top"] = it["top"] + poss[j][1]
+it["bottom"] = it["bottom"] + poss[j][1]
 for n in ["x0", "x1", "top", "bottom"]:
 it[n] /= ZM
 it["top"] += self.page_cum_height[i]

@ -250,8 +235,7 @@ class RAGFlowPdfParser:
 self.tb_cpns.extend(pg)

 def gather(kwd, fzy=10, ption=0.6):
-eles = Recognizer.sort_Y_firstly(
-[r for r in self.tb_cpns if re.match(kwd, r["label"])], fzy)
+eles = Recognizer.sort_Y_firstly([r for r in self.tb_cpns if re.match(kwd, r["label"])], fzy)
 eles = Recognizer.layouts_cleanup(self.boxes, eles, 5, ption)
 return Recognizer.sort_Y_firstly(eles, 0)


@ -259,8 +243,7 @@ class RAGFlowPdfParser:
 headers = gather(r".*header$")
 rows = gather(r".* (row|header)")
 spans = gather(r".*spanning")
-clmns = sorted([r for r in self.tb_cpns if re.match(
-r"table column$", r["label"])], key=lambda x: (x["pn"], x["layoutno"], x["x0"]))
+clmns = sorted([r for r in self.tb_cpns if re.match(r"table column$", r["label"])], key=lambda x: (x["pn"], x["layoutno"], x["x0"]))
 clmns = Recognizer.layouts_cleanup(self.boxes, clmns, 5, 0.5)
 for b in self.boxes:
 if b.get("layout_type", "") != "table":

@ -271,8 +254,7 @@ class RAGFlowPdfParser:
 b["R_top"] = rows[ii]["top"]
 b["R_bott"] = rows[ii]["bottom"]

-ii = Recognizer.find_overlapped_with_threshold(
-b, headers, thr=0.3)
+ii = Recognizer.find_overlapped_with_threshold(b, headers, thr=0.3)
 if ii is not None:
 b["H_top"] = headers[ii]["top"]
 b["H_bott"] = headers[ii]["bottom"]

@ -305,12 +287,12 @@ class RAGFlowPdfParser:
 return
 bxs = [(line[0], line[1][0]) for line in bxs]
 bxs = Recognizer.sort_Y_firstly(
-[{"x0": b[0][0] / ZM, "x1": b[1][0] / ZM,
-"top": b[0][1] / ZM, "text": "", "txt": t,
-"bottom": b[-1][1] / ZM,
-"chars": [],
-"page_number": pagenum} for b, t in bxs if b[0][0] <= b[1][0] and b[0][1] <= b[-1][1]],
-self.mean_height[pagenum-1] / 3
+[
+{"x0": b[0][0] / ZM, "x1": b[1][0] / ZM, "top": b[0][1] / ZM, "text": "", "txt": t, "bottom": b[-1][1] / ZM, "chars": [], "page_number": pagenum}
+for b, t in bxs
+if b[0][0] <= b[1][0] and b[0][1] <= b[-1][1]
+],
+self.mean_height[pagenum - 1] / 3,
 )

 # merge chars in the same rect

@ -321,7 +303,7 @@ class RAGFlowPdfParser:
 continue
 ch = c["bottom"] - c["top"]
 bh = bxs[ii]["bottom"] - bxs[ii]["top"]
-if abs(ch - bh) / max(ch, bh) >= 0.7 and c["text"] != ' ':
+if abs(ch - bh) / max(ch, bh) >= 0.7 and c["text"] != " ":
 self.lefted_chars.append(c)
 continue
 bxs[ii]["chars"].append(c)

@ -345,8 +327,7 @@ class RAGFlowPdfParser:
 img_np = np.array(img)
 for b in bxs:
 if not b["text"]:
-left, right, top, bott = b["x0"] * ZM, b["x1"] * \
-ZM, b["top"] * ZM, b["bottom"] * ZM
+left, right, top, bott = b["x0"] * ZM, b["x1"] * ZM, b["top"] * ZM, b["bottom"] * ZM
 b["box_image"] = self.ocr.get_rotate_crop_image(img_np, np.array([[left, top], [right, top], [right, bott], [left, bott]], dtype=np.float32))
 boxes_to_reg.append(b)
 del b["txt"]

@ -356,21 +337,17 @@ class RAGFlowPdfParser:
 del boxes_to_reg[i]["box_image"]
 logging.info(f"__ocr recognize {len(bxs)} boxes cost {timer() - start}s")
 bxs = [b for b in bxs if b["text"]]
-if self.mean_height[pagenum-1] == 0:
-self.mean_height[pagenum-1] = np.median([b["bottom"] - b["top"]
-for b in bxs])
+if self.mean_height[pagenum - 1] == 0:
+self.mean_height[pagenum - 1] = np.median([b["bottom"] - b["top"] for b in bxs])
 self.boxes.append(bxs)

 def _layouts_rec(self, ZM, drop=True):
 assert len(self.page_images) == len(self.boxes)
-self.boxes, self.page_layout = self.layouter(
-self.page_images, self.boxes, ZM, drop=drop)
+self.boxes, self.page_layout = self.layouter(self.page_images, self.boxes, ZM, drop=drop)
 # cumlative Y
 for i in range(len(self.boxes)):
-self.boxes[i]["top"] += \
-self.page_cum_height[self.boxes[i]["page_number"] - 1]
-self.boxes[i]["bottom"] += \
-self.page_cum_height[self.boxes[i]["page_number"] - 1]
+self.boxes[i]["top"] += self.page_cum_height[self.boxes[i]["page_number"] - 1]
+self.boxes[i]["bottom"] += self.page_cum_height[self.boxes[i]["page_number"] - 1]

 def _text_merge(self):
 # merge adjusted boxes

@ -390,12 +367,10 @@ class RAGFlowPdfParser:
 while i < len(bxs) - 1:
 b = bxs[i]
 b_ = bxs[i + 1]
-if b.get("layoutno", "0") != b_.get("layoutno", "1") or b.get("layout_type", "") in ["table", "figure",
-"equation"]:
+if b.get("layoutno", "0") != b_.get("layoutno", "1") or b.get("layout_type", "") in ["table", "figure", "equation"]:
 i += 1
 continue
-if abs(self._y_dis(b, b_)
-) < self.mean_height[bxs[i]["page_number"] - 1] / 3:
+if abs(self._y_dis(b, b_)) < self.mean_height[bxs[i]["page_number"] - 1] / 3:
 # merge
 bxs[i]["x1"] = b_["x1"]
 bxs[i]["top"] = (b["top"] + b_["top"]) / 2

@ -408,16 +383,14 @@ class RAGFlowPdfParser:

 dis_thr = 1
 dis = b["x1"] - b_["x0"]
-if b.get("layout_type", "") != "text" or b_.get(
-"layout_type", "") != "text":
+if b.get("layout_type", "") != "text" or b_.get("layout_type", "") != "text":
 if end_with(b, ",") or start_with(b_, "(,"):
 dis_thr = -8
 else:
 i += 1
 continue

-if abs(self._y_dis(b, b_)) < self.mean_height[bxs[i]["page_number"] - 1] / 5 \
-and dis >= dis_thr and b["x1"] < b_["x1"]:
+if abs(self._y_dis(b, b_)) < self.mean_height[bxs[i]["page_number"] - 1] / 5 and dis >= dis_thr and b["x1"] < b_["x1"]:
 # merge
 bxs[i]["x1"] = b_["x1"]
 bxs[i]["top"] = (b["top"] + b_["top"]) / 2

@ -429,23 +402,22 @@ class RAGFlowPdfParser:
 self.boxes = bxs

 def _naive_vertical_merge(self, zoomin=3):
-bxs = Recognizer.sort_Y_firstly(
-self.boxes, np.median(
-self.mean_height) / 3)
+import math
+bxs = Recognizer.sort_Y_firstly(self.boxes, np.median(self.mean_height) / 3)

 column_width = np.median([b["x1"] - b["x0"] for b in self.boxes])
+if not column_width or math.isnan(column_width):
+column_width = self.mean_width[0]
 self.column_num = int(self.page_images[0].size[0] / zoomin / column_width)
 if column_width < self.page_images[0].size[0] / zoomin / self.column_num:
-logging.info("Multi-column................... {} {}".format(column_width,
-self.page_images[0].size[0] / zoomin / self.column_num))
+logging.info("Multi-column................... {} {}".format(column_width, self.page_images[0].size[0] / zoomin / self.column_num))
 self.boxes = self.sort_X_by_page(self.boxes, column_width / self.column_num)

 i = 0
 while i + 1 < len(bxs):
 b = bxs[i]
 b_ = bxs[i + 1]
-if b["page_number"] < b_["page_number"] and re.match(
-r"[0-9 •一—-]+$", b["text"]):
+if b["page_number"] < b_["page_number"] and re.match(r"[0-9 •一—-]+$", b["text"]):
 bxs.pop(i)
 continue
 if not b["text"].strip():
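The hunk above guards the column-count estimate in `_naive_vertical_merge`: when the median box width is zero or NaN, it falls back to `self.mean_width[0]` before dividing. A minimal sketch of that guard follows, using only the standard library so that it runs without NumPy. `estimate_column_num` and its parameters are hypothetical names, with `page_width` standing in for `self.page_images[0].size[0] / zoomin`.

```python
import math
from statistics import median


def estimate_column_num(box_widths, page_width, fallback_width):
    # Median box width; NaN models the "no boxes" case, which is what
    # NumPy's median of an empty list evaluates to.
    column_width = median(box_widths) if box_widths else float("nan")
    # Same guard as the hunk: a zero or NaN width would break the division below.
    if not column_width or math.isnan(column_width):
        column_width = fallback_width
    return int(page_width / column_width)
```

Without the guard, `int()` of a NaN quotient raises `ValueError` and a zero width raises `ZeroDivisionError`, which is presumably what the added fallback is meant to avoid.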

@ -453,8 +425,7 @@ class RAGFlowPdfParser:
 continue
 concatting_feats = [
 b["text"].strip()[-1] in ",;:'\",、‘“;:-",
-len(b["text"].strip()) > 1 and b["text"].strip(
-)[-2] in ",;:'\",‘“、;:",
+len(b["text"].strip()) > 1 and b["text"].strip()[-2] in ",;:'\",‘“、;:",
 b_["text"].strip() and b_["text"].strip()[0] in "。;?!?”)),,、:",
 ]
 # features for not concating

@ -462,21 +433,20 @@ class RAGFlowPdfParser:
 b.get("layoutno", 0) != b_.get("layoutno", 0),
 b["text"].strip()[-1] in "。?!?",
 self.is_english and b["text"].strip()[-1] in ".!?",
-b["page_number"] == b_["page_number"] and b_["top"] -
-b["bottom"] > self.mean_height[b["page_number"] - 1] * 1.5,
-b["page_number"] < b_["page_number"] and abs(
-b["x0"] - b_["x0"]) > self.mean_width[b["page_number"] - 1] * 4,
+b["page_number"] == b_["page_number"] and b_["top"] - b["bottom"] > self.mean_height[b["page_number"] - 1] * 1.5,
+b["page_number"] < b_["page_number"] and abs(b["x0"] - b_["x0"]) > self.mean_width[b["page_number"] - 1] * 4,
 ]
 # split features
-detach_feats = [b["x1"] < b_["x0"],
-b["x0"] > b_["x1"]]
+detach_feats = [b["x1"] < b_["x0"], b["x0"] > b_["x1"]]
 if (any(feats) and not any(concatting_feats)) or any(detach_feats):
-logging.debug("{} {} {} {}".format(
-b["text"],
-b_["text"],
-any(feats),
-any(concatting_feats),
-))
+logging.debug(
+"{} {} {} {}".format(
+b["text"],
+b_["text"],
+any(feats),
+any(concatting_feats),
+)
+)
 i += 1
 continue
 # merge up and down

@ -529,14 +499,11 @@ class RAGFlowPdfParser:
 if not concat_between_pages and down["page_number"] > up["page_number"]:
 break

-if up.get("R", "") != down.get(
-"R", "") and up["text"][-1] != ",":
+if up.get("R", "") != down.get("R", "") and up["text"][-1] != ",":
 i += 1
 continue

-if re.match(r"[0-9]{2,3}/[0-9]{3}$", up["text"]) \
-or re.match(r"[0-9]{2,3}/[0-9]{3}$", down["text"]) \
-or not down["text"].strip():
+if re.match(r"[0-9]{2,3}/[0-9]{3}$", up["text"]) or re.match(r"[0-9]{2,3}/[0-9]{3}$", down["text"]) or not down["text"].strip():
 i += 1
 continue


@ -544,14 +511,12 @@ class RAGFlowPdfParser:
 i += 1
 continue

-if up["x1"] < down["x0"] - 10 * \
-mw or up["x0"] > down["x1"] + 10 * mw:
+if up["x1"] < down["x0"] - 10 * mw or up["x0"] > down["x1"] + 10 * mw:
 i += 1
 continue

 if i - dp < 5 and up.get("layout_type") == "text":
-if up.get("layoutno", "1") == down.get(
-"layoutno", "2"):
+if up.get("layoutno", "1") == down.get("layoutno", "2"):
 dfs(down, i + 1)
 boxes.pop(i)
 return

@ -559,8 +524,7 @@ class RAGFlowPdfParser:
 continue

 fea = self._updown_concat_features(up, down)
-if self.updown_cnt_mdl.predict(
-xgb.DMatrix([fea]))[0] <= 0.5:
+if self.updown_cnt_mdl.predict(xgb.DMatrix([fea]))[0] <= 0.5:
 i += 1
 continue
 dfs(down, i + 1)

@ -584,16 +548,14 @@ class RAGFlowPdfParser:
 c["text"] = c["text"].strip()
 if not c["text"]:
 continue
-if t["text"] and re.match(
-r"[0-9\.a-zA-Z]+$", t["text"][-1] + c["text"][-1]):
+if t["text"] and re.match(r"[0-9\.a-zA-Z]+$", t["text"][-1] + c["text"][-1]):
 t["text"] += " "
 t["text"] += c["text"]
 t["x0"] = min(t["x0"], c["x0"])
 t["x1"] = max(t["x1"], c["x1"])
 t["page_number"] = min(t["page_number"], c["page_number"])
 t["bottom"] = c["bottom"]
-if not t["layout_type"] \
-and c["layout_type"]:
+if not t["layout_type"] and c["layout_type"]:
 t["layout_type"] = c["layout_type"]
 boxes.append(t)


@ -605,25 +567,20 @@ class RAGFlowPdfParser:
 findit = False
 i = 0
 while i < len(self.boxes):
-if not re.match(r"(contents|目录|目次|table of contents|致谢|acknowledge)$",
-re.sub(r"( | |\u3000)+", "", self.boxes[i]["text"].lower())):
+if not re.match(r"(contents|目录|目次|table of contents|致谢|acknowledge)$", re.sub(r"( | |\u3000)+", "", self.boxes[i]["text"].lower())):
 i += 1
 continue
 findit = True
-eng = re.match(
-r"[0-9a-zA-Z :'.-]{5,}",
-self.boxes[i]["text"].strip())
+eng = re.match(r"[0-9a-zA-Z :'.-]{5,}", self.boxes[i]["text"].strip())
 self.boxes.pop(i)
 if i >= len(self.boxes):
 break
-prefix = self.boxes[i]["text"].strip()[:3] if not eng else " ".join(
-self.boxes[i]["text"].strip().split()[:2])
+prefix = self.boxes[i]["text"].strip()[:3] if not eng else " ".join(self.boxes[i]["text"].strip().split()[:2])
 while not prefix:
 self.boxes.pop(i)
 if i >= len(self.boxes):
 break
-prefix = self.boxes[i]["text"].strip()[:3] if not eng else " ".join(
-self.boxes[i]["text"].strip().split()[:2])
+prefix = self.boxes[i]["text"].strip()[:3] if not eng else " ".join(self.boxes[i]["text"].strip().split()[:2])
 self.boxes.pop(i)
 if i >= len(self.boxes) or not prefix:
 break

@ -662,10 +619,12 @@ class RAGFlowPdfParser:
 self.boxes.pop(i + 1)
 continue

-if b["text"].strip()[0] != b_["text"].strip()[0] \
-or b["text"].strip()[0].lower() in set("qwertyuopasdfghjklzxcvbnm") \
-or rag_tokenizer.is_chinese(b["text"].strip()[0]) \
-or b["top"] > b_["bottom"]:
+if (
+b["text"].strip()[0] != b_["text"].strip()[0]
+or b["text"].strip()[0].lower() in set("qwertyuopasdfghjklzxcvbnm")
+or rag_tokenizer.is_chinese(b["text"].strip()[0])
+or b["top"] > b_["bottom"]
+):
 i += 1
 continue
 b_["text"] = b["text"] + "\n" + b_["text"]

@ -685,12 +644,8 @@ class RAGFlowPdfParser:
 if "layoutno" not in self.boxes[i]:
 i += 1
 continue
-lout_no = str(self.boxes[i]["page_number"]) + \
-"-" + str(self.boxes[i]["layoutno"])
-if TableStructureRecognizer.is_caption(self.boxes[i]) or self.boxes[i]["layout_type"] in ["table caption",
-"title",
-"figure caption",
-"reference"]:
+lout_no = str(self.boxes[i]["page_number"]) + "-" + str(self.boxes[i]["layoutno"])