Mirror of https://github.com/infiniflow/ragflow.git (synced 2026-01-30 23:26:36 +08:00)

Doc: miscellaneous (#10641)

### What problem does this PR solve?

### Type of change

- [x] Documentation Update


@ -9,7 +9,7 @@ The component equipped with reasoning, tool usage, and multi-agent collaboration

---

An **Agent** component fine-tunes the LLM and sets its prompt. From v0.21.0 onwards, an **Agent** component is able to work independently and with the following capabilities:

An **Agent** component fine-tunes the LLM and sets its prompt. From v0.20.5 onwards, an **Agent** component is able to work independently and with the following capabilities:

- Autonomous reasoning with reflection and adjustment based on environmental feedback.
- Use of tools or subagents to complete tasks.

@ -24,7 +24,7 @@ An **Agent** component is essential when you need the LLM to assist with summari

2. If your Agent involves dataset retrieval, ensure you [have properly configured your target knowledge base(s)](../../dataset/configure_knowledge_base.md).

2. If your Agent involves dataset retrieval, ensure you [have properly configured your target dataset(s)](../../dataset/configure_knowledge_base.md).

## Quickstart

@ -113,7 +113,7 @@ Click the dropdown menu of **Model** to show the model configuration window.

|

||||

- **Model**: The chat model to use.

|

||||

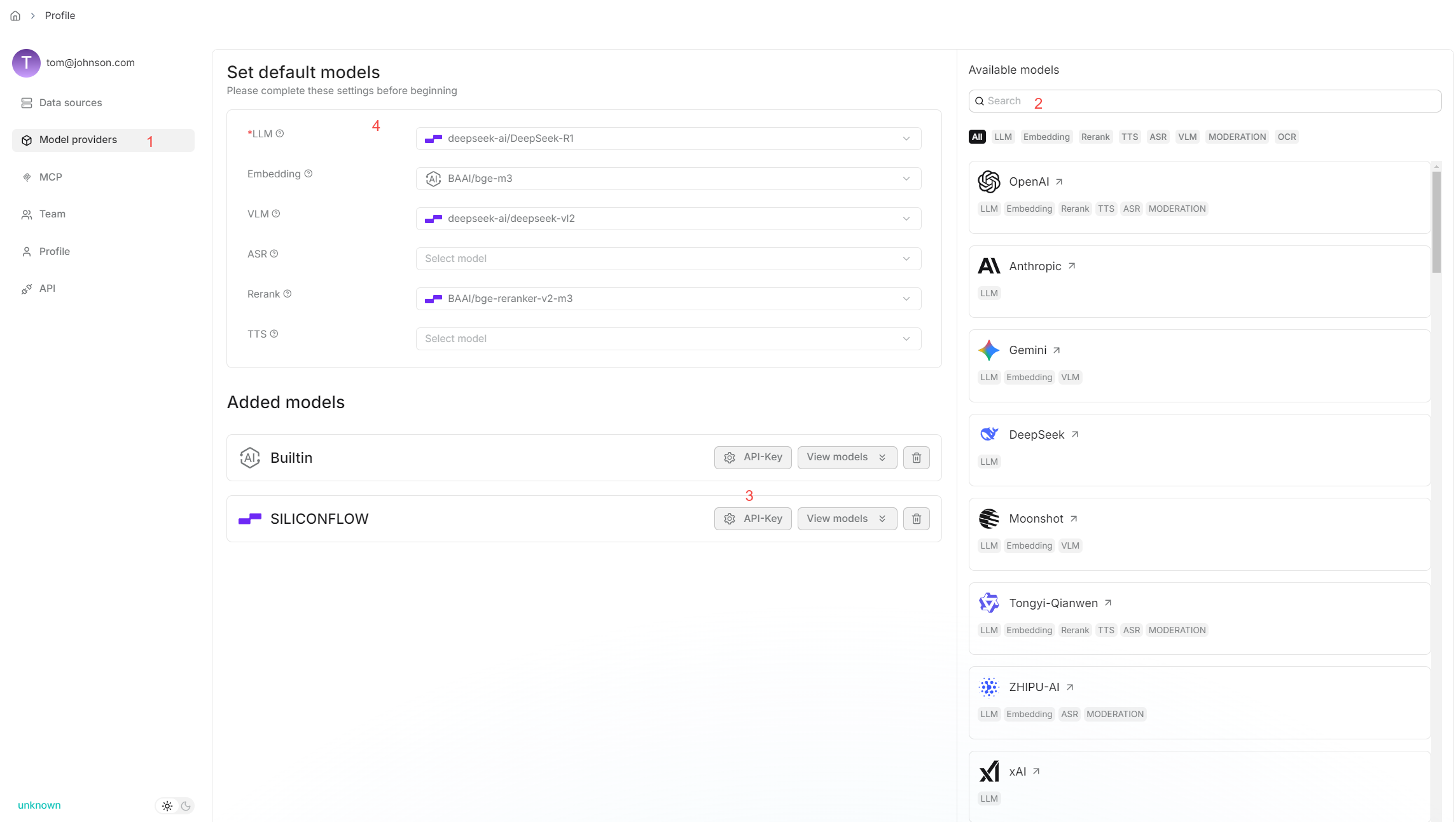

- Ensure you set the chat model correctly on the **Model providers** page.

|

||||

- You can use different models for different components to increase flexibility or improve overall performance.

|

||||

- **Freedom**: A shortcut to **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty** settings, indicating the freedom level of the model. From **Improvise**, **Precise**, to **Balance**, each preset configuration corresponds to a unique combination of **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**.

|

||||

- **Creavity**: A shortcut to **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty** settings, indicating the freedom level of the model. From **Improvise**, **Precise**, to **Balance**, each preset configuration corresponds to a unique combination of **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**.

|

||||

This parameter has three options:

|

||||

- **Improvise**: Produces more creative responses.

|

||||

- **Precise**: (Default) Produces more conservative responses.

|

||||

@ -132,11 +132,12 @@ Click the dropdown menu of **Model** to show the model configuration window.

- **Frequency penalty**: Discourages the model from repeating the same words or phrases too frequently in the generated text.
  - A higher **frequency penalty** value results in the model being more conservative in its use of repeated tokens.
  - Defaults to 0.7.
- **Max tokens**:
- **Max tokens**:
  This sets the maximum length of the model's output, measured in the number of tokens (words or pieces of words). It is disabled by default, allowing the model to determine the number of tokens in its responses.

:::tip NOTE
- It is not necessary to stick with the same model for all components. If a specific model is not performing well for a particular task, consider using a different one.
- If you are uncertain about the mechanism behind **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**, simply choose one of the three options of **Preset configurations**.
- If you are uncertain about the mechanism behind **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**, simply choose one of the three options of **Creativity**.
:::

### System prompt

@ -147,7 +148,7 @@ An **Agent** component relies on keys (variables) to specify its data inputs. It

#### Advanced usage

From v0.21.0 onwards, four framework-level prompt blocks are available in the **System prompt** field, enabling you to customize and *override* prompts at the framework level. Type `/` or click **(x)** to view them; they appear under the **Framework** entry in the dropdown menu.

From v0.20.5 onwards, four framework-level prompt blocks are available in the **System prompt** field, enabling you to customize and *override* prompts at the framework level. Type `/` or click **(x)** to view them; they appear under the **Framework** entry in the dropdown menu.

- `task_analysis` prompt block
  - This block is responsible for analyzing tasks: either a user task or a task assigned by the lead Agent when the **Agent** component is acting as a Sub-Agent.

@ -42,7 +42,7 @@ Click the dropdown menu of **Model** to show the model configuration window.

- **Model**: The chat model to use.
  - Ensure you set the chat model correctly on the **Model providers** page.
  - You can use different models for different components to increase flexibility or improve overall performance.
- **Freedom**: A shortcut to **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty** settings, indicating the freedom level of the model. From **Improvise**, **Precise**, to **Balance**, each preset configuration corresponds to a unique combination of **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**.
- **Creativity**: A shortcut to **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty** settings, indicating the freedom level of the model. From **Improvise**, **Precise**, to **Balance**, each preset configuration corresponds to a unique combination of **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**.
  This parameter has three options:
  - **Improvise**: Produces more creative responses.
  - **Precise**: (Default) Produces more conservative responses.

@ -61,10 +61,12 @@ Click the dropdown menu of **Model** to show the model configuration window.

- **Frequency penalty**: Discourages the model from repeating the same words or phrases too frequently in the generated text.
  - A higher **frequency penalty** value results in the model being more conservative in its use of repeated tokens.
  - Defaults to 0.7.
- **Max tokens**:
  This sets the maximum length of the model's output, measured in the number of tokens (words or pieces of words). It is disabled by default, allowing the model to determine the number of tokens in its responses.

:::tip NOTE
- It is not necessary to stick with the same model for all components. If a specific model is not performing well for a particular task, consider using a different one.
- If you are uncertain about the mechanism behind **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**, simply choose one of the three options of **Preset configurations**.
- If you are uncertain about the mechanism behind **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**, simply choose one of the three options of **Creativity**.
:::

### Message window size

29

docs/guides/agent/agent_component_reference/indexer.md

Normal file


@ -0,0 +1,29 @@

---
sidebar_position: 40
slug: /indexer_component
---

# Indexer component

A component that defines how chunks are indexed.

---

An **Indexer** component indexes chunks and configures their storage formats in the document engine.

## Scenario

An **Indexer** component is the mandatory ending component for all ingestion pipelines.

## Configurations

### Search method

This setting configures how chunks are stored in the document engine: as full-text, embeddings, or both.

### Filename embedding weight

This setting defines the filename's contribution to the final embedding, which is a weighted combination of both the chunk content and the filename. Essentially, a higher value gives the filename more influence in the final *composite* embedding.

- 0.1: Filename contributes 10% (chunk content 90%)

- 0.5 (maximum): Filename contributes 50% (chunk content 50%)
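The weighting described above can be illustrated with a minimal sketch. It assumes the composite embedding is a plain linear blend of two same-dimension vectors; the function name `composite_embedding`, the lower bound of 0, and the toy vectors are hypothetical and are not taken from the RAGFlow codebase.

```python
def composite_embedding(content_vec, filename_vec, filename_weight=0.1):
    """Blend a chunk-content vector with a filename vector.

    Hypothetical helper: assumes a simple linear combination in which the
    filename gets `filename_weight` and the chunk content gets the remainder.
    """
    # 0.5 is the documented maximum; the lower bound of 0 is an assumption.
    if not 0.0 <= filename_weight <= 0.5:
        raise ValueError("filename_weight is expected to lie in [0, 0.5]")
    if len(content_vec) != len(filename_vec):
        raise ValueError("both vectors must have the same dimension")
    content_weight = 1.0 - filename_weight
    return [
        filename_weight * f + content_weight * c
        for c, f in zip(content_vec, filename_vec)
    ]


# Toy two-dimensional vectors, chosen only to make the weights visible.
content = [1.0, 0.0]
filename = [0.0, 1.0]
print(composite_embedding(content, filename, filename_weight=0.1))
print(composite_embedding(content, filename, filename_weight=0.5))
```

With a weight of 0.1 the result stays close to the content vector; at 0.5 both inputs contribute equally.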


@ -87,9 +87,9 @@ RAGFlow employs a combination of weighted keyword similarity and weighted vector

Defaults to 0.2.

### Keyword similarity weight

### Vector similarity weight

This parameter sets the weight of keyword similarity in the combined similarity score. The total of the two weights must equal 1.0. Its default value is 0.7, which means the weight of vector similarity in the combined search is 1 - 0.7 = 0.3.

This parameter sets the weight of vector similarity in the composite similarity score. The total of the two weights must equal 1.0. Its default value is 0.3, which means the weight of keyword similarity in a combined search is 1 - 0.3 = 0.7.
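As a rough illustration of how the two weights interact, the sketch below computes a composite score as a weighted sum. It assumes both similarities are already normalized to a comparable range; the function name `combined_similarity` and the sample scores are hypothetical, and the actual scoring in RAGFlow may differ in detail.

```python
def combined_similarity(keyword_sim, vector_sim, vector_weight=0.3):
    """Weighted sum of keyword and vector similarity.

    Hypothetical helper: the keyword weight is derived as 1 - vector_weight,
    so the two weights always total 1.0.
    """
    if not 0.0 <= vector_weight <= 1.0:
        raise ValueError("vector_weight must lie in [0, 1]")
    keyword_weight = 1.0 - vector_weight
    return keyword_weight * keyword_sim + vector_weight * vector_sim


# Made-up scores for a single chunk, for illustration only.
score = combined_similarity(keyword_sim=0.8, vector_sim=0.4, vector_weight=0.3)
print(round(score, 2))
```

With the default vector weight of 0.3, the keyword similarity carries the remaining 0.7 of the score.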

### Top N

80

docs/guides/agent/agent_component_reference/transformer.md

Normal file


@ -0,0 +1,80 @@

---
sidebar_position: 37
slug: /transformer_component
---

# Transformer component

A component that uses an LLM to extract insights from the chunks.

---

A **Transformer** component uses an LLM to extract insights from chunks. It *typically* precedes the **Indexer** in the ingestion pipeline, but you can also chain multiple **Transformer** components in sequence.

## Scenario

A **Transformer** component is essential when you need the LLM to extract new information, such as keywords, questions, metadata, and summaries, from the original chunks.

## Configurations

### Model

Click the dropdown menu of **Model** to show the model configuration window.

- **Model**: The chat model to use.
  - Ensure you set the chat model correctly on the **Model providers** page.
  - You can use different models for different components to increase flexibility or improve overall performance.
- **Creativity**: A shortcut to **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty** settings, indicating the freedom level of the model. From **Improvise**, **Precise**, to **Balance**, each preset configuration corresponds to a unique combination of **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**.
  This parameter has three options:
  - **Improvise**: Produces more creative responses.
  - **Precise**: (Default) Produces more conservative responses.
  - **Balance**: A middle ground between **Improvise** and **Precise**.
- **Temperature**: The randomness level of the model's output. Defaults to 0.1.
  - Lower values lead to more deterministic and predictable outputs.
  - Higher values lead to more creative and varied outputs.
  - A temperature of zero results in the same output for the same prompt.
- **Top P**: Nucleus sampling.
  - Reduces the likelihood of generating repetitive or unnatural text by setting a threshold *P* and restricting sampling to the smallest set of most probable tokens whose cumulative probability reaches *P*.
  - Defaults to 0.3.
- **Presence penalty**: Encourages the model to include a more diverse range of tokens in the response.
  - A higher **presence penalty** value results in the model being more likely to generate tokens that have not yet been included in the generated text.
  - Defaults to 0.4.
- **Frequency penalty**: Discourages the model from repeating the same words or phrases too frequently in the generated text.
  - A higher **frequency penalty** value results in the model being more conservative in its use of repeated tokens.
  - Defaults to 0.7.
- **Max tokens**:
  This sets the maximum length of the model's output, measured in the number of tokens (words or pieces of words). It is disabled by default, allowing the model to determine the number of tokens in its responses.
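For readers who want intuition for **Temperature** and **Top P**, here is a minimal sketch of temperature-scaled softmax followed by nucleus filtering. It is a generic illustration of these sampling techniques, not the implementation used by any particular model provider; all function names and the toy logits are hypothetical.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p):
    """Keep the smallest set of most probable tokens whose cumulative
    probability reaches top_p, then renormalize over that set."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


def sample(logits, temperature=0.1, top_p=0.3, rng=random):
    """Sample one token index using temperature scaling and nucleus filtering."""
    probs = softmax_with_temperature(logits, temperature)
    nucleus = top_p_filter(probs, top_p)
    tokens = list(nucleus)
    weights = [nucleus[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Toy logits for a three-token vocabulary.
print(top_p_filter([0.5, 0.3, 0.2], top_p=0.7))
print(sample([2.0, 1.0, 0.0]))
```

With the defaults shown in this document (temperature 0.1, Top P 0.3), the distribution is sharp and the nucleus is small, which is why the output tends to be conservative.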

:::tip NOTE
- It is not necessary to stick with the same model for all components. If a specific model is not performing well for a particular task, consider using a different one.
- If you are uncertain about the mechanism behind **Temperature**, **Top P**, **Presence penalty**, and **Frequency penalty**, simply choose one of the three options of **Creativity**.
:::
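The two penalties can be pictured as adjustments to a token's score before sampling. The sketch below assumes the commonly documented formulation in which the presence penalty is subtracted once for any token that has already appeared, and the frequency penalty is subtracted once per prior occurrence. Whether a given model provider applies exactly this rule is an assumption; the function name and toy values are hypothetical.

```python
from collections import Counter


def apply_penalties(logits, generated_tokens,
                    presence_penalty=0.4, frequency_penalty=0.7):
    """Lower the scores of tokens that already appear in the output.

    Assumed rule: score -= presence_penalty + frequency_penalty * count
    for every token with count > 0; unseen tokens are left untouched.
    """
    counts = Counter(generated_tokens)
    adjusted = dict(logits)
    for token, count in counts.items():
        if token in adjusted:
            adjusted[token] -= presence_penalty + frequency_penalty * count
    return adjusted


# Toy scores: "the" was generated twice, "cat" once, "sat" not yet.
scores = {"the": 2.0, "cat": 1.0, "sat": 0.5}
print(apply_penalties(scores, ["the", "the", "cat"]))
```

In this toy example the repeated token loses the most score, so the unseen token becomes relatively more likely.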

### Result destination

Select the type of output to be generated by the LLM:

- Summary
- Keywords
- Questions
- Metadata

### System prompt

Typically, you use the system prompt to describe the task for the LLM, specify how it should respond, and outline other miscellaneous requirements. This guide does not elaborate on the topic, as it is as extensive as prompt engineering itself.

:::tip NOTE
The system prompt here automatically updates to match your selected **Result destination**.
:::

### User prompt

The user-defined prompt. For example, you can type `/` or click **(x)** to insert variables of preceding components in the ingestion pipeline as the LLM's input.

### Output

The global variable name for the output of the **Transformer** component, which can be referenced by subsequent **Transformer** components in the ingestion pipeline.

- Default: `chunks`
- Type: `Array<Object>`
